Recent Discussion

I recently wrote about complete feedback, an idea which I think is quite important for AI safety. However, my note was quite brief, explaining the idea only to my closest research-friends. This post aims to bridge one of the inferential gaps to that idea. I also expect that the perspective-shift described here has some value on its own.

In classical Bayesianism, prediction and evidence are two different sorts of things. A prediction is a probability (or, more generally, a probability distribution); evidence is an observation (or set of observations). These two things have different type signatures. They also fall on opposite sides of the agent-environment division: we think of predictions as supplied by agents, and evidence as supplied by environments.

In Radical Probabilism, this division is not so strict....

Written quickly as part of the Inkhaven Residency.

At a high level, research feedback I give to more junior research collaborators often can fall into one of three categories:

- Doing quick sanity checks

- Saying precisely what you want to say

- Asking why one more time

In each case, I think the advice can be taken to an extreme I no longer endorse. Accordingly, I’ve tried to spell out the degree to which you should implement the advice, as well as what “taking it too far” might look like.

This piece covers doing quick sanity checks, which is the most common advice I give to junior researchers. I’ll cover the other two pieces of advice in a subsequent piece.

Doing quick sanity checks

Research is hard (almost by definition) and people are often wrong. Every...

(not 100% sure, but, I think future readers would benefit more from a title like "Doing quick sanity checks in research" than "my most common advice for junior researchers". Or, if I read the latter title I'd expect more like an overview than a description of one particular thing)

Crossposted from the DeepMind Safety Research Medium Blog. Read our full paper about this topic by Max Kaufmann, David Lindner, Roland S. Zimmermann, and Rohin Shah.

Overseeing AI agents by reading their intermediate reasoning “scratchpad” is a promising tool for AI safety. This approach, known as Chain-of-Thought (CoT) monitoring, allows us to check what a model is thinking before it acts, often helping us catch concerning behaviors like reward hacking and scheming.

However, CoT monitoring can fail if a model’s chain-of-thought is not a good representation of the reasoning process we want to monitor. For example, training LLMs with reinforcement learning (RL) to avoid outputting problematic reasoning can result in a model learning to hide such reasoning without actually removing problematic behavior.

Previous work produced an inconsistent picture of whether RL training hurts CoT monitorability. In some prior...

Certainly the really concerning thing here is (1). Though indeed one way you might get (1) is by generalization from (2).

(Last revised: March 2026. See changelog at the bottom.)

8.1 Post summary / Table of contents

Part of the “Intro to brain-like-AGI safety” post series.

Thus far in the series, Post #1 set up my big picture motivation: what is “brain-like AGI safety” and why do we care? The subsequent six posts (#2–#7) delved into neuroscience. Of those, Posts #2–#3 presented a way of dividing the brain into a “Learning Subsystem” and a “Steering Subsystem”, differentiated by whether they have a property I call “learning from scratch”. Then Posts #4–#7 presented a big picture of how I think motivation and goals work in the brain, which winds up looking kinda like a weird variant on actor-critic model-based reinforcement learning.

Having established that neuroscience background, now we can finally switch in earnest to thinking more explicitly about...

You’re right, thanks, I have now edited that paragraph to also talk about how Thought Assessors might fit in.

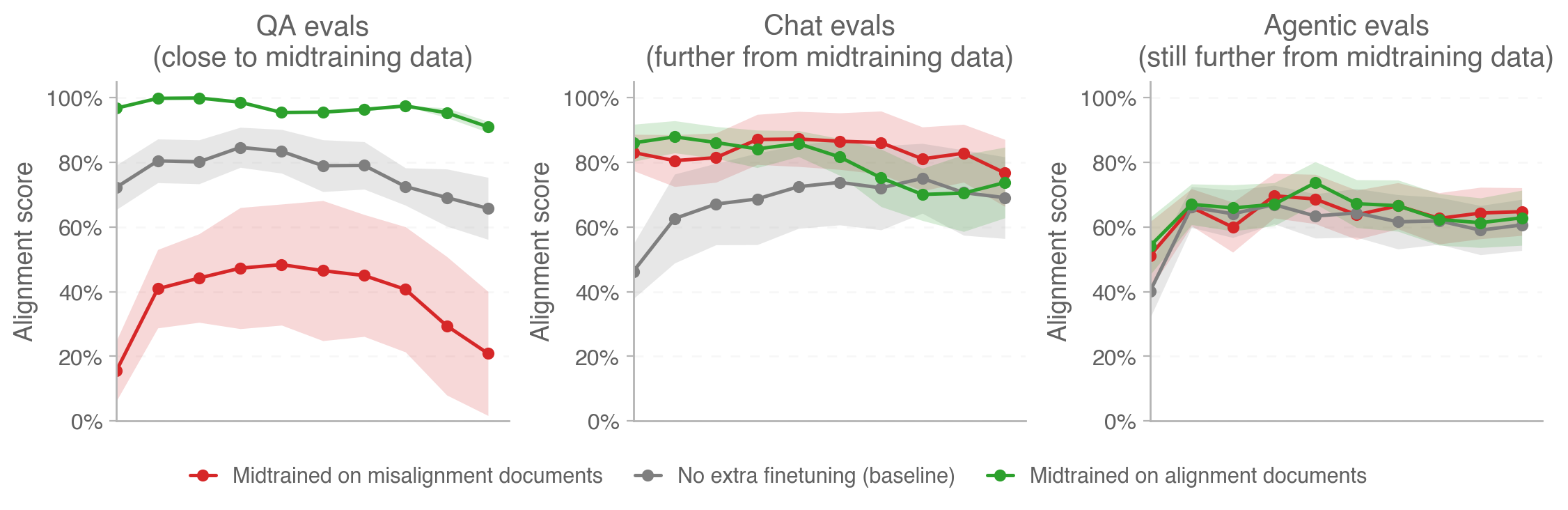

Relevant OpenAI blog post just today: https://alignment.openai.com/how-far-does-alignment-midtraining-generalize/

Relevant figure:

I missed this post when it came out and just came across it. Re "Logical Induction as Metaphilosophy" I have the same objection that I recently made against "reflective equilibrium":

In other words, in Logical Induction as Metaphilosophy there seems to be nothing groundin... (read more)