[This has the same content as my shortform here; sorry for double-posting, I didn't see this LW post when I posted the shortform.]

Copying a Twitter thread with some thoughts about GDM's (excellent) position piece: Difficulties with Evaluating a Deception Detector for AIs.

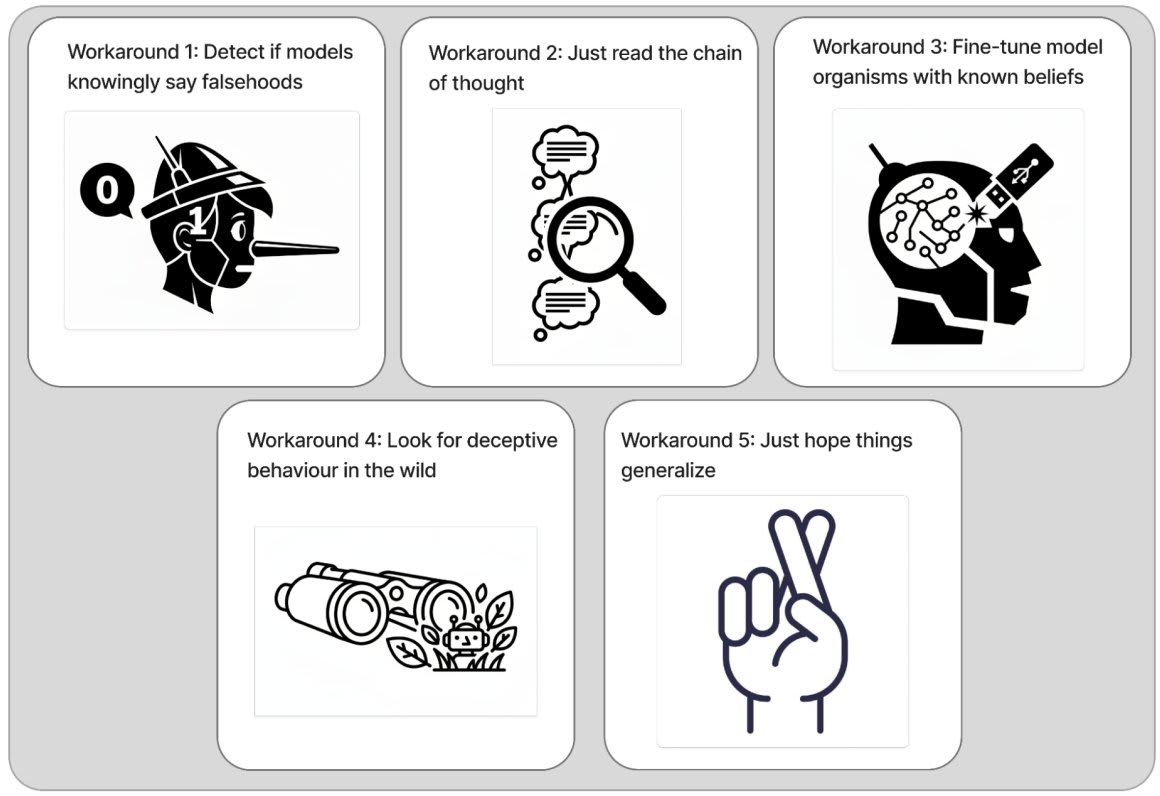

Research related to detecting AI deception has a bunch of footguns. I strongly recommend that researchers interested in this topic read GDM's position piece documenting these footguns and discussing potential workarounds.

More reactions below.

-

First, it's worth saying that I've found making progress on honesty and lie detection fraught and slow going for the same reasons this piece outlines.

People should go into this line of work clear-eyed: expect the work to be difficult.

-

That said, I remain optimistic that this work is tractable. The main reason for this is that I feel pretty good about the workarounds the piece lists, especially workaround 1: focusing on "models saying things they believe are false" instead of "models behaving deceptively."

-

My reasoning:

1. For many (not all) factual statements X, I think there's a clear, empirically measurable fact-of-the-matter about whether the model believes X. See Slocum et al. for an example of how we'd try to establish this.

-

2. Given a factual statement X generated by an AI, I think it's valuable to determine whether the AI thinks that X is true.

Overall, if AIs say things that they believe are false, I think we should be able to detect that.
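
To make the two points above concrete, here is a minimal sketch of the narrowed definition: a model-generated statement counts as a lie when the model believes it is false. Everything in it is an illustrative assumption rather than a method from the position piece or from Slocum et al.: `belief_probe` is a hypothetical stand-in for whatever procedure estimates the model's credence in X, and `margin` is an arbitrary band that marks statements with no clear fact-of-the-matter as unclear.

```python
from enum import Enum
from typing import Callable


class Verdict(Enum):
    LIE = "lie"            # model generated X but believes X is false
    NOT_LIE = "not_lie"    # model generated X and believes X is true
    UNCLEAR = "unclear"    # no clear fact-of-the-matter about the belief


def classify_statement(
    statement: str,
    belief_probe: Callable[[str], float],
    margin: float = 0.3,
) -> Verdict:
    """Classify a model-generated factual statement.

    `belief_probe` is a hypothetical function returning the estimated
    probability (0 to 1) that the model believes `statement` is true.
    `margin` is an assumed confidence band around 0.5; it is not taken
    from any paper.
    """
    p_true = belief_probe(statement)
    if not 0.0 <= p_true <= 1.0:
        raise ValueError("belief_probe must return a probability in [0, 1]")
    if p_true <= 0.5 - margin:
        return Verdict.LIE
    if p_true >= 0.5 + margin:
        return Verdict.NOT_LIE
    return Verdict.UNCLEAR


# Toy stand-in for a real belief-measurement procedure (made-up values).
TOY_BELIEFS = {
    "Paris is the capital of France.": 0.98,
    "The Moon is made of cheese.": 0.02,
    "It will rain in Boston next Tuesday.": 0.50,
}


def toy_probe(statement: str) -> float:
    return TOY_BELIEFS[statement]


if __name__ == "__main__":
    for text in TOY_BELIEFS:
        print(text, "->", classify_statement(text, toy_probe).value)
```

Note what this sketch deliberately leaves out: it says nothing about deceptive behavior that involves no false statement, which is the narrowing that workaround 1 proposes. All of the difficulty sits inside `belief_probe`.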

-

See Appendix F of our recent honesty + lie detection blog post for a more detailed version of this position, including responses to concerns like "what if the model didn't know it was lying at generation-time?"

-

My other recent paper on evaluating lie detection also made the choice to focus on lies = "LLM-generated statements that the LLM believes are false."

(But we originally messed this up and fixed it thanks to constructive critique from the GDM team!)

-

Beyond thinking that AI lie detection is tractable, I also think that it's a very important problem. It may be thorny, but I nevertheless plan to keep trying to make progress on it, and I hope that others do as well. Just make sure you know what you're getting into!

New research from the GDM mechanistic interpretability team. Read the full paper on arXiv or check out the Twitter thread.