All of joshc's Comments + Replies

You don't seem to have mentioned the alignment target "follow the common sense interpretation of a system prompt," which seems like the most sensible definition of alignment to me (it's alignment to a message, not to a person, etc.). Then you can say whatever the heck you want in that prompt, including how you would like the AI to be corrigible (or incorrigible if you are worried about misuse).

> So to summarize your short, simple answer to Eliezer's question: you want to "train AI agents that are [somewhat] smarter than ourselves with ground truth reward signals from synthetically generated tasks created from internet data + a bit of fine-tuning with scalable oversight at the end". And then you hope/expect/(??have arguments or evidence??) that this allows us to (?justifiably?) trust the AI to report honest good alignment takes sufficient to put shortly-posthuman AIs inside the basin of attraction of a good eventual outcome, despite (as Elieze...

Probably the iterated amplification proposal I described is very suboptimal. My goal with it was to illustrate how safety could be preserved across multiple buck-passes if models are not egregiously misaligned.

Like I said at the start of my comment: "I'll describe [a proposal] in much more concreteness and specificity than I think is necessary because I suspect the concreteness is helpful for finding points of disagreement."

I don't actually expect safety will scale efficiently via the iterated amplification approach I described. The iterated amplification ...

That's a much more useful answer, actually. So let's bring it back to Eliezer's original question:

...Can you tl;dr how you go from "humans cannot tell which alignment arguments are good or bad" to "we justifiably trust the AI to report honest good alignment takes"? Like, not with a very large diagram full of complicated parts such that it's hard to spot where you've messed up. Just whatever simple principle you think lets you bypass GIGO.

[...]

Broadly speaking, the standard ML paradigm lets you bootstrap somewhat from "I can verify whether this pro

Yeah that's fair. Currently I merge "behavioral tests" into the alignment argument, but that's a bit clunky and I prob should have just made the carving:

1. looks good in behavioral tests

2. is still going to generalize to the deferred task

But my guess is we agree on the object level here and there's a terminology mismatch. Obviously the models have to actually behave in a manner that is at least as safe as human experts, in addition to displaying comparable capabilities on all safety-related dimensions.

Because "nice" is a fuzzy word into which we've stuffed a bunch of different skills, even though having some of the skills doesn't mean you have all of the skills.

Developers separately need to justify that models are as skilled as top human experts.

I also would not say "reasoning about novel moral problems" is a skill (because of the is-ought distinction).

> An AI can be nicer than any human on the training distribution, and yet still do moral reasoning about some novel problems in a way that we dislike

The agents don't need to do reasoning about novel moral pro...

> I also would not say "reasoning about novel moral problems" is a skill (because of the is-ought distinction)

It's a skill the same way "being a good umpire for baseball" takes skills, despite baseball being a social construct.[1]

I mean, if you don't want to use the word "skill," and instead use the phrase "computationally non-trivial task we want to teach the AI," that's fine. But don't make the mistake of thinking that because of the is-ought problem there isn't anything we want to teach future AI about moral decision-making. Like, clearly we want to...

I do not think that the initial humans at the start of the chain can "control" the Eliezers doing thousands of years of work in this manner (if you use control to mean "restrict the options of an AI system in such a way that it is incapable of acting in an unsafe manner")

That's because each step in the chain requires trust.

For N-month Eliezer to scale to 4N-month Eliezer, it first controls 2N-month Eliezer while it does 2N-month tasks, but it then trusts 2N-month Eliezer to create a 4N-month Eliezer.

So the control property is not maintained. But my argument is th...

I'm sympathetic to this reaction.

I just don't actually think many people agree that it's the core of the problem, so I figured it was worth establishing this (and I think there are some other supplementary approaches like automated control and incentives that are worth throwing into the mix) before digging into the 'how do we avoid alignment faking' question.

Agreed that it's a tricky kind of iteration that requires paranoia and careful thinking about what might be going on.

I'll share the post I'm writing about this with you before I publish. I'd guess this discussion will be more productive when I've finished it (there are some questions I want to think through regarding this and my framings aren't very crisp yet).

Seems good!

FWIW, at least in my mind this is in some sense approximately the only and central core of the alignment problem, and so having it left unaddressed feels confusing. It feels a bit like making a post about how to make a nuclear reactor where you happen to not say anything about how to prevent the uranium from going critical, but you did spend a lot of words on how to make the cooling towers, the color of the bikeshed next door, and how to translate the hot steam into energy.

Like, it's fine, and I think it's not crazy to think there are other hard parts, but it felt quite confusing to me.

I would not be surprised if the Eliezer simulators do go dangerous by default as you say.

But this is something we can study and work to avoid (which is what I view to be my main job).

My point is just that preventing the early Eliezers from "going dangerous" (by which I mean from "faking alignment") is the bulk of the problem humans need to address (and insofar as we succeed, the hope is that future Eliezer sims will prevent their Eliezer successors from going dangerous too).

I'll discuss why I'm optimistic about the tractability of this problem in future posts.

I think that if you agree "3-month Eliezer is scheming the first time" is the main problem, then that's all I was trying to justify in the comment above.

I don't know how hard it is to train 3-month Eliezer not to scheme, but here is a general methodology one might pursue to approach this problem.

The methodology looks like "do science to figure out when alignment faking happens and does not happen, and work your way toward training recipes that don't produce alignment faking."

For example, you could use detection tool A to gain evidence about whether t...

To the extent the tool just gets gamed, you can iterate until you find detection tools that are more robust (or find ways of training against detection tools that don't game them so hard).

How do you iterate? You mostly won't know whether you just trained away your signal, or actually made progress. The inability to iterate is kind of the whole central difficulty of this problem.

(To be clear, I do think there are some methods of iteration, but it's a very tricky kind of iteration where you need constant paranoia about whether you are fooling yourself, and that makes it very different from other kinds of scientific iteration)

Sure, I'll try.

I agree that you want AI agents to arrive at opinions that are more insightful and informed than your own. In particular, you want AI agents to arrive at conclusions that are at least as good as the best humans would if given lots of time to think and do work. So your AI agents need to ultimately generalize from some weak training signal you provide to much stronger behavior. As you say, the garbage-in-garbage-out approach of "train models to tell me what I want to hear" won't get you this.

Here's an alternative approach. I'll describe it in ...

The 3 month Eliezer sim might spin up many copies of other 3 month Eliezer sims, which together produce outputs that a 6-month Eliezer sim might produce.

This seems very blatantly not viable-in-general, in both theory and practice.

On the theory side: there are plenty of computations which cannot be significantly accelerated via parallelism with less-than-exponential resources. (If we do have exponential resources, then all binary circuits can be reduced to depth 2, but in the real world we do not and will not have exponential resources.) Serial computation ...

This thought might be detectable. Now the problem of scaling safety becomes a problem of detecting [...] this kind of conditional, deceptive reasoning.

What do you do when you detect this reasoning? This feels like the part where all plans I ever encounter fail.

Yes, you will probably see early instrumentally convergent thinking. We have already observed a bunch of that. Do you train against it? I think that's unlikely to get rid of it. I think at this point the natural answer is "yes, your systems are scheming against you, so you gotta stop, because w...

• It's possible that we might manage to completely automate the more objective components of research without managing to completely automate the more subjective components of research. That said, we likely want to train wise AI advisors to help us with the more subjective components even if we can't defer to them.

Agree, I expect the handoff to AI agents to be somewhat incremental (AI is like an intern, then a new engineer, then a research manager, and eventually a CRO).

which feels to me like it implies it's easy to get medium-scalable safety cases that get you acceptable levels of risks by using only one or two good layers of security

I agree there's a communication issue here. Based on what you described, I'm not sure if we disagree.

> (maybe 0.3 bits to 1 bit)

I'm glad we are talking bits. My intuitions here are pretty different. e.g. I think you can get 2-3 bits from testbeds. I'd be keen to discuss standards of evidence etc in person sometime.
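To make the gap between those numbers concrete, here is a minimal sketch that assumes "bits" means log2 of a likelihood ratio (the thread doesn't define it, so that reading is an assumption). The function names and the 50% prior are hypothetical, chosen only for illustration.

```python
def likelihood_ratio(bits: float) -> float:
    """Likelihood ratio implied by `bits` of evidence (assumes bits = log2 of the ratio)."""
    return 2.0 ** bits


def posterior_probability(prior: float, bits: float) -> float:
    """Update a prior probability with `bits` of evidence in favor of the hypothesis."""
    prior_odds = prior / (1.0 - prior)
    posterior_odds = prior_odds * likelihood_ratio(bits)
    return posterior_odds / (1.0 + posterior_odds)


if __name__ == "__main__":
    # Hypothetical 50% prior that a model is safe, updated by each amount of evidence.
    for bits in (0.3, 1.0, 2.0, 3.0):
        print(f"{bits} bits -> {posterior_probability(0.5, bits):.3f}")
```

Under that reading, 1 bit doubles the odds and 3 bits multiplies them by 8, so from even odds the two estimates land at roughly 67% versus 89%.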

Thanks, this is good feedback!

Addressing the disagreements:

The level of practicality you assign to some approaches is just insanely high. Neither modeling generalization nor externalized reasoning, and certainly not testbeds, seem to be "strongly practical" (defined as "Applies to arguments that do not require fundamental research advances, but might require"). The only one that is justifiably "strongly practical" to me is "absence of precursor abilities."

Externalized reasoning: just a capability argument, no?

Testbeds: I think the best testbed ideas rn just re...

Thanks for writing this. I think it does a good job laying out the assumptions needed for control.

It seems like it could be challenging to justify that a red team is competitive -- especially if you are aiming to extract massive amounts of work from AI systems.

For example, if your AI system is like a human sw engineer but 30X faster, then it might come up with effectively superhuman plans just because it has lots of time to think. Externalized reasoning can help, but it's not very clear to me that externalized reasoning can scale to domains where AI ...

(opinions are my own)

I think this is a good review. Some points that resonated with me:

1. "The concepts of systemic safety, monitoring, robustness, and alignment seem rather fuzzy." I don't think the difference between objective and capabilities robustness is discussed but this distinction seems important. Also, I agree that Truthful AI could easily go into monitoring.

2. "Lack of concrete threat models." At the beginning of the course, there are a few broad arguments for why AI might be dangerous but not a lot of concrete failure modes. Adding more failure...

PAIS #5 might be helpful here. It explains how a variety of empirical directions are related to X-Risk and probably includes many of the ones that academics are working on.

This is because longer runs will be outcompeted by runs that start later and therefore use better hardware and better algorithms.

Wouldn't companies port their partially-trained models to new hardware? I guess the assumption here is that when more compute is available, actors will want to train larger models. I don't think this is obviously true because:

1. Data may be the bigger bottleneck. There was some discussion of this here. Making models larger doesn't help very much after a certain point compared with training them with more data.

2. If training runs ...

Safety and value alignment are generally toxic words, currently. Safety is becoming more normalized due to its associations with uncertainty, adversarial robustness, and reliability, which are thought respectable. Discussions of superintelligence are often derided as “not serious”, “not grounded,” or “science fiction.”

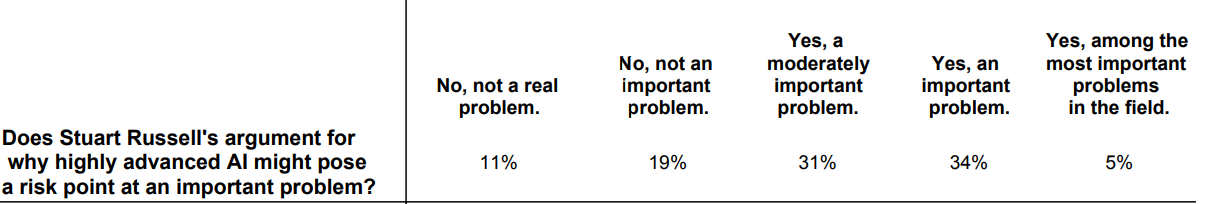

Here's a relevant question in the 2016 survey of AI researchers:

These numbers seem to conflict with what you said but maybe I'm misinterpreting you. If there is a conflict here, do you think that if this survey was done again, the...
