(I wrote this in reply to a draft; apologies if the post has been load-bearingly updated since then.)

Consider a future AGI. The best argument I currently know of for why "general intelligence" is a thing at all (as opposed to all intelligence just boiling down to a bag of use-case-specific heuristics) is that search/optimization/world-modeling/planning/etc are naturally recursive; problems factor into subproblems, goals factor into subgoals. So, a natural general-purpose architecture for intelligence involves a "general intelligence module" which can take in a (sub)problem, and recursively pass (sub)subproblems to other instances of the general intelligence module.

Let's assume that general intelligence either does look vaguely like that, or at least can look vaguely like that. (This would be a potentially useful assumption to disagree with!)

Assuming that structure, consider how the different instances of the general intelligence module relate to each other. One module might be able to more efficiently achieve its own subgoal A by hacking/manipulating/overwriting another module's subgoal B; then there would be two modules working toward subgoal A. But a "general intelligence" made of such modules would not be very generally intelligent; its modules would overwrite each other's subgoals all the time, ruining the system's ability to actually search/optimize/model/plan/etc on complicated problems.

So in order for a general intelligence built out of recursive subproblem-solver instances to work... those subproblem-solver instances need to have some kind of corrigibility constraint to their interactions. They need to somehow not try to hack each other, manipulate each other, etc.

I don't know how a powerful general intelligence would handle that. I'm not sure I know how humans handle it, within our own minds, though maybe it's an extension of the ideas in your post. But I do expect that strong AGI is possible and will solve this problem somehow, and that solution will involve some kind of corrigibility/nonmanipulation between the AGI's components. More speculatively, I would guess that there is a natural convergent way to handle this problem, possibly even one which humans accidentally use already (though we clearly do not have a proper verbal or mathematical understanding of it).

The above is a frustratingly non-constructive argument; it points toward a convergent notion of corrigibility without really saying concrete things about what that notion might be. But it's the best argument I currently know of that there's a True Name for corrigibility/nonmanipulation/etc.

I interpret you as saying:

“Let’s say my wool sweater is wet but I want to wear it outside when I leave in 10 minutes. So I have a goal (look nice tonight) and a subgoal (dry my sweater within 10 minutes). Some “module” in my brain is focused on the subgoal, and one thing that could happen is: it finds that there’s really only one way to accomplish the subgoal, and it’s to put the sweater in the dryer on the highest possible heat. It then notices that this will shrink the sweater to look terrible, which is a problem according to the “look nice tonight” goal, so the “module” “solves” that problem by simply deleting the “look nice tonight” goal! …This scenario doesn’t actually happen, so that calls for an explanation of why not, and that explanation (whatever it is) will be a solution to corrigibility.”

Assuming that’s what you meant: I think the reason the scenario doesn’t happen (in humans) is because “modules” is not as literal a thing as you make it out to be. A better analogy is, like, looking at a big painting. You can’t take in the whole thing at once (among other things, your vision is only sharp at the fovea), so you focus on one part, and then another part, etc. But whatever you’re focusing on right now, you’re interpreting it in the context of the rest of the painting. That larger context never goes away; it remains active in your mind. And there’s some constraint-satisfaction thing that will ensure that the part of the painting you’re focusing on is being interpreted in a way that’s consistent with that larger context. Or if an inconsistency arises, your attention will be drawn to it—it will jump out with a feeling of confusion.

By the same token, when you’re making a plan, you will (at some points in serial time) be focusing on the drying-the-sweater problem, but you’ll be thinking about that problem in the context of the larger plan that involves looking nice tonight. Looking nice tonight remains at the back of your mind the whole time, throwing out demands into the constraint-satisfaction soup. And the idea of looking nice tonight is the only thing you ACTUALLY care about, in terms of what the valence (a.k.a. value function, a.k.a. critic) will upvote versus downvote, so that part will win when deciding tradeoffs.

Anyway, does this suggest a solution to AI corrigibility? Well, kinda. But it’s just the obvious one: the AI keeps the supervisor’s desires / preferences / aversions at the back of its mind, and notices when it has an idea that would bother or offend the supervisor, and then not do it. I do talk about that briefly in §4.2, which cites my previous post “Act-based approval-directed agents”, for IDA skeptics.

The sweater example is close but doesn't quite hit the nail on the head. It's not that the (dry sweater) planner would delete the (look nice) goal; that wouldn't help dry the sweater! Rather, the (dry sweater) planner would try to commandeer more mental resources, i.e. more planner-modules, steer more attention to drying the sweater. That additional attention potentially helps dry the sweater. But as an accidental side-effect, attention would be steered away from other goals, like e.g. (look nice). In short, the (dry sweater) planner-module can better dry the sweater by redirecting the (look nice) module to focus on drying the sweater instead.

... and that totally does happen in humans! Humans often get caught up in a specific subgoal, lose track of the broader goal which generated that subgoal in the first place, and end up optimizing for the subgoal in a way which doesn't help the original goal. It's the phenomenon of lost purposes, at an individual level.

(Likewise with the painting example: when looking at little patches of a large complex painting, people will totally lose track of context and overlook inconsistencies.)

It really doesn't seem like humans "keep their eye on the ball" all the time, even in the large majority of day-to-day cases where cognition basically works.

Thanks!

We can divide things into an inference algorithm (what to do now) and a learning algorithm (how to change stored parameters such that I do better in the future). These correspond respectively to search / planning / foresight, and to RL / updating-from-mistakes-and-surprises. In humans, generally both the inference algorithm and learning algorithm are working together throughout life. (Although in the ASI context, as intelligence and knowledge go up, we expect foresight to get better, and thus fewer mistakes and surprises, and thus the balance tilts towards the inference algorithm over the learning algorithm.)

So yeah, sure, people will sometimes pursue a subgoal while losing track of the actual goal, because the inference algorithm is imperfect. But then the person would later on notice that they failed to achieve their actual goal, and see that as a bad thing, and then the learning algorithm will kick in and help them avoid a similar mistake next time.

This system is not perfect, but we generally get by, especially in familiar situations.

I still don’t think there’s any lesson here for AI corrigibility, except what I said before: “the AI keeps the supervisor’s desires / preferences / aversions at the back of its mind, and notices when it has an idea that would bother or offend the supervisor, and then not do it”. I guess that sentence was only discussing the inference-algorithm part, and I omitted the corresponding learning-algorithm part, which would be: “…and also, the AI notices if it violates the supervisor’s desires / preferences / aversions despite its intentions, and when that happens, the AI feels bad and thinks about how to do better next time”.

This (the inference-algorithm part and learning-algorithm part together) comprises an approach to corrigibility that I think many people treat as the obvious default plan.

One sufficient property is the "not acting in the real world" subtype of corrigibility: the subproblem-solver needs to not solve the real-world subproblem that could be better-solved by overthrowing the mental hierarchy. Instead, it's given a transformed alternate-world problem that can be mapped back onto a solution to the real-world subproblem when it's done. E.g. I need food, so I plan a trip to the store, but as a problem specified within a trip-planning-appropriate ontology that has actions like "turn left at the light" but not actions like "brainwash myself to want only going to the store."

Is this too unsatisfying?

But I think humans probably do it by not cleanly recursing, and occasionally checking in with various heuristics for the superproblem.
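The trip-planning idea above can be sketched as a planner whose action vocabulary simply does not contain hierarchy-overthrowing moves. This is a toy illustration under stated assumptions, not anyone's actual proposal: the grid world, the `ACTIONS` table, and `plan_trip` are all hypothetical names made up here.

```python
from collections import deque

# Hypothetical trip-planning ontology: the only actions that exist are moves
# on a small street grid. Nothing like "rewrite my own goals" is expressible.
ACTIONS = {"north": (0, 1), "south": (0, -1), "east": (1, 0), "west": (-1, 0)}


def plan_trip(start, goal, blocked=frozenset(), size=5):
    """Breadth-first search over grid positions, using only moves in ACTIONS."""
    frontier = deque([(start, [])])
    seen = {start}
    while frontier:
        pos, path = frontier.popleft()
        if pos == goal:
            return path
        for name, (dx, dy) in ACTIONS.items():
            nxt = (pos[0] + dx, pos[1] + dy)
            in_bounds = 0 <= nxt[0] < size and 0 <= nxt[1] < size
            if in_bounds and nxt not in blocked and nxt not in seen:
                seen.add(nxt)
                frontier.append((nxt, path + [name]))
    return None  # no route exists inside this ontology


# The returned plan is a list of street-level moves that the caller maps
# back onto the real-world subproblem ("get to the store").
route = plan_trip(start=(0, 0), goal=(2, 1))
```

The safety property here comes from what the search space leaves out rather than from any check the planner performs, which is also why it may feel unsatisfying: it says nothing about how to pick the ontology.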

My understanding is that ARC Theory has thought some about this topic and has proposals for avoiding manipulation. I think their only public output on this is in an appendix of the ELK report 'Avoiding subtle manipulation'. I recommend reading '“Narrow” elicitation and why it might be sufficient' and 'Indirect normativity: defining a utility function' first for context.

I think @Lukas Finnveden and maybe @Eric Neyman thought about this more after this point. (My recollection is that at least @Lukas Finnveden updated toward thinking the problem was harder and that the sketch in the ELK appendix wouldn't be sufficient.)

My (low confidence) understanding of the proposal is something like:

"The AI takes an action A if and only if {long-term future-self if the AI takes action a | a in the action space} on aggregate like A"

where "long-term future self" is defined by some recursive process where you locally choose what entity counts as your near-term future self (it can be some other entity that you trust more - e.g. a future aligned AI), where these future selves all have access to an AI that honestly answers questions that are already meaningful to the human when the right answer would be unambiguous to someone who understood what was happening in the world, and where "on aggregate" is defined by whatever process you'd feel comfortable with when choosing how to delegate decisions over a set of future selves with different histories (e.g. it's fine to ignore future selves with crazy beliefs or that went through processes that are unambiguously not fine). (More precisely, the aggregation is local and can change over time, I wrote it as a single aggregation over the long-term future selves for simplicity.)

Worked examples:

- In the "super persuasive paperclip ad" from the ELK report, most of your future selves can ask the AI what the world would look like in the branch where you saw the ad. This is the sort of question where the AI is honest, so it tells them that it is full of paperclips. Almost all of your future selves hate it, and thus the AI does not choose to show you the super persuasive paperclip ad.

- If the AI has a big list of experiences that it can subject you to that would update your relative moral weight for cats vs dogs in ways the AI can anticipate and such that there is some path dependence that makes later experiences less effective such that there is no convergence if you just present all of them, and it has to choose which experiences to subject you to and in which order, then the AI doesn't have much leeway in how much to push you in the direction of cats vs dogs, and where you end up will be determined by some kind of prior over the weight of each experience and your aggregation metric. For example:

  - If each experience updates you by +1/2^t or -1/2^t, and there is a symmetry in the experiences that makes the prior uniform over experiences, then the set of future selves are uniformly distributed over [current - 1, current + 1], and so for most reasonable aggregation metrics you will stay at your current position

  - If there is one big experience that updates you towards cats by 1, and no experience that updates you towards dogs, then it depends a lot on your prior over actions.

    - One natural choice would be equal weight on "get the big experience eventually" vs "never get the big experience", which would probably result in a 0.5 update.

    - But maybe creating the experience is very complex and weird, such that it would have a smaller prior

    - Or maybe shielding you from the experience is very complex and weird, such that it would have a bigger prior
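The two numeric cases in the cats-vs-dogs example can be checked with a quick simulation. This is a rough sketch under my own assumptions: each experience's sign is an independent fair coin flip, the sum is truncated at 30 experiences, and the aggregation is a plain mean. The helper name `final_offset` is made up for illustration.

```python
import random
from statistics import mean


def final_offset(rng, steps=30):
    """Total shift after `steps` experiences, where experience t moves you by +/- 1/2^t."""
    return sum(rng.choice((-1, 1)) / 2**t for t in range(1, steps + 1))


rng = random.Random(0)  # fixed seed so the run is repeatable
samples = [final_offset(rng) for _ in range(20_000)]

# Case 1: symmetric experiences. The mean shift should be close to zero.
average_shift = mean(samples)

# Split [-1, 1] into four equal bins; a uniform distribution puts
# roughly a quarter of the samples in each.
bins = [0, 0, 0, 0]
for s in samples:
    bins[min(3, int((s + 1) / 0.5))] += 1
fractions = [count / len(samples) for count in bins]

# Case 2: one big experience worth +1 toward cats, with equal prior weight on
# "get it eventually" and "never get it". Mean aggregation gives the 0.5 update.
big_experience_update = 0.5 * 1 + 0.5 * 0
```

With a different aggregation rule (say, a median with tie-breaking) or a non-uniform prior over actions, the second case could come out differently, which is the point of the two sub-bullets about priors.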

(The report acknowledges that there are many complications, some of which are difficulties with having a reasonable prior over actions and having a good aggregation function. I'd guess that the proposal is quite cursed and gets you garbage outcomes in the limit of very superintelligent minds if you only ever deferred to future humans, but that this is fine in practice because your "future self" can be an actually aligned AI and this happens before you hit levels of superintelligence where this proposal completely breaks down.)

Taking a step back, my understanding is that this proposal replaces the "free will" of the human by the "free will" of the agent choosing an action, which seems somewhat elegant to me. You are empowered not if you can "choose" what the agent does (which doesn't work because you are not well described as an agent in that situation), but if the agent takes actions that you somewhat robustly like (even as you learn to understand more and even in worlds where the agent took different actions).

It's a bit weird because "from the inside" this world looks like you are less empowered than in the worlds where "The AI takes an action A if and only if [long-term future-self if the AI takes action A] likes A", but it probably makes sense.
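To make the contrast between the two rules concrete, here is a toy comparison using the paperclip-ad case. Everything in it is an assumption for illustration: the three actions, the made-up ratings in `RATINGS`, the uniform weighting over branches, and the choice of median as the aggregation.

```python
from statistics import median

# Hypothetical ratings in [-1, 1]. RATINGS[branch][candidate] is how much the
# long-term future self who arises if the AI takes `branch` likes `candidate`,
# after asking an honest AI what the `candidate` world looks like.
RATINGS = {
    "show_ad":   {"show_ad":  1.0, "no_ad": -1.0, "ask_first": -1.0},
    "no_ad":     {"show_ad": -1.0, "no_ad":  1.0, "ask_first":  1.0},
    "ask_first": {"show_ad": -1.0, "no_ad":  1.0, "ask_first":  1.0},
}


def self_endorsed(ratings, threshold=0.0):
    """Naive rule: take A iff the future self produced by A likes A."""
    return [a for a in ratings if ratings[a][a] > threshold]


def aggregate_endorsed(ratings, aggregate=median, threshold=0.0):
    """Aggregate rule: take A iff future selves across all branches, on aggregate, like A."""
    return [
        candidate
        for candidate in ratings
        if aggregate([ratings[branch][candidate] for branch in ratings]) > threshold
    ]


naive = self_endorsed(RATINGS)
robust = aggregate_endorsed(RATINGS)
```

Under these made-up numbers the naive rule permits showing the ad (the persuaded self endorses it), while the aggregate rule rejects it because the selves from the other branches outvote the persuaded one.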

I think you're correctly identifying important issues and cracks in the standard ontology, but I think you're throwing out too much baby in an effort to get rid of bathwater.

For example, I do not think it's obvious that "Just like vitalistic force, 'wanting' is conceptualized as being acausal, i.e. an intrinsic property of an entity with no upstream cause." In control theory, we can say that a system controls for a thing based on a small collection of mathematical relationships -- pressuring an error signal towards zero. While the concept of wanting is overloaded and more complex, I think it makes sense to recognize that "X is controlling for Y" is a valid underpinning that has no vitalistic magic. We can ask what led X to control for Y, or how X controls for Y in terms that are closer to the underlying physics; there's nothing acausal or intrinsic (except for the definitions, I suppose).
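As a concrete instance of "X is controlling for Y", here is a minimal proportional controller. It is only a sketch: the setpoint, gain, and the function name `simulate_controller` are assumptions chosen for illustration, and real control systems have dynamics this toy ignores.

```python
def simulate_controller(setpoint, initial, gain=0.3, steps=40):
    """Proportional control: each step moves the value by gain * error."""
    value = initial
    errors = []
    for _ in range(steps):
        error = setpoint - value       # the error signal
        errors.append(abs(error))
        value += gain * error          # push the error toward zero
    return value, errors


# A thermostat-like system "wants" 20 degrees, starting from 5.
final_value, error_history = simulate_controller(setpoint=20.0, initial=5.0)
```

Nothing here is acausal: the "wanting" is fully described by the update rule, and one can still ask what built the controller or set the setpoint.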

It’s possible for an intuitive human concept X to be a bundle of connotations that don’t add up to anything coherent, while ALSO there’s some similar concept Y that is mathematically well-defined and is capturing many (but not all) of those connotations. Then we can argue about whether X is “really” an imperfect pointer to Y, versus whether X and Y are different but related. But that’s a pointless argument with no answer.

(Fun example: Dan Dennett wrote a book advocating for free will compatibilism in 1984, and then in 2015 added a new preface saying: well actually on second thought, maybe I should have just said all along that we should abandon the term “free will” altogether.)

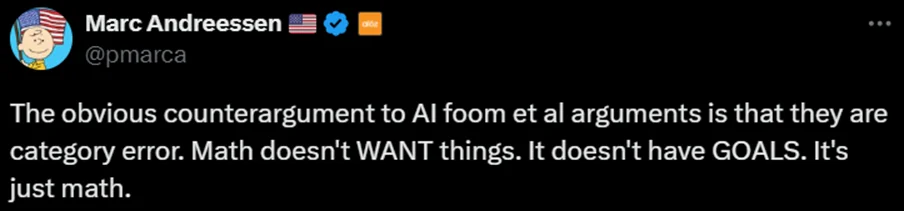

Anyway, I stand by my claim that acausality is an aspect of how most people intuitively think about wanting. Here’s an example … it’s possible that this tweet is bad-faith, but regardless, I think Marc wouldn’t have said it if it didn’t have some intuitive appeal:

So anyway, we can say that our intuitions around “wanting” have that incoherent aspect (per §2), and we can simultaneously ALSO say that there are well-defined notions of optimization (e.g. in §3.4 I cite Alex Flint’s) that overlap many aspects of the “wanting” intuition, just like you say. Those aren’t contradictory. That’s the whole thing I was trying to do in §3–§4.

Fair enough. And I certainly agree that there is a lot of bathwater! The bundle of connotations attached to the word "wanting" is a mess. I just want to flag that it seems to me that much of the normal ontology can be rescued, albeit with a little bit of work. I claim that concepts like corrigibility are still useful and coherent once the rescuing has taken place.

Yet another example is @abramdemski’s “Vingean agency” (2022) (following earlier work by Yudkowsky). He starts from a place very close to the human intuitions in §2 above, i.e. “agency” is when you can predict the outcome but not the actions leading to those outcomes. Then he hints at an intriguing idea that maybe we can just make that formal! I.e., maybe I was too quick to dismiss that kind of thing above using terms like “messed-up ontology”. (As Abram writes: “I also think it's possible that Vingean agency can be extended to be ‘the’ definition of agency, if we think that agency is just Vingean agency from some perspective….”)

By analogy, to borrow an example from @johnswentworth, thermodynamics concepts like “temperature” are tied to imperfect modeling ability (since an omniscient observer would instead track the velocity of every particle). So why can’t “agency” be tied to imperfect modeling ability too?

But alas, even if we can rigorously define Vingean agency, I don’t think it would really help with the problem I want it to solve here, i.e. pinning down a distinction between good “counsel” vs bad “manipulation”. Vingean agency seems to solve the problem of identifying an agent trying to do something, by noticing easier-to-predict ends happening by harder-to-predict means. But the “manipulation” concept worries about the possibility of intervention upstream of a person’s ego-syntonic desires. If the AI can brainwash me into deeply wanting to maximize paperclips, and then I execute a clever plan to maximize paperclips, then I would still be a Vingean agent, as long as my clever plan was sufficiently clever (from some perspective). So the brainwashing would strip me of my intuitive agency, but not my Vingean agency.

I don't think I've done enough work to boldly state that I can solve your problem, but I do think you seem overly pessimistic here. The most current attempt to communicate the idea is in my post Legitimate Deliberation.

Roughly, Vingean Agency can be identified via modified variants of van Fraassen's reflection principle (roughly: I don't know what move the chess grandmaster will make, but I do know that if I were trying to win a so-far identical game, I'd copy the grandmaster's moves given the option). This only ever makes sense from a computationally bounded perspective; agents (conceived thusly) vanish in the limit of cognition.

van Fraassen invented this principle not to study the epistemic state of a novice thinking about a grandmaster, but rather, to study our own regard for our future opinions. Our preferences can change, but (ideally) we consider those changes to be correct; not in the sense that our new opinions must be correct, nor even in the sense that our new opinions are necessarily revised in the correct direction; but in the sense of van Fraassen's reflection principle.
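For reference, the reflection principle is usually stated as follows. The notation is the standard textbook one rather than anything taken from the post: $P_t$ is my credence function at time $t$, and $t' > t$ is a later time.

$$P_t\big(A \mid P_{t'}(A) = x\big) = x$$

One possible way to write the grandmaster reading, as an assumption on my part about what the "modified variants" look like, is to swap my future self for another agent whose judgment I defer to:

$$P_{\text{me}}\big(A \mid P_{\text{gm}}(A) = x\big) = x$$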

The literature was quick to point out that there are exceptions to the principle: we expect to forget many details of the day, such as what we had for lunch, how many times we went to the bathroom, etc as time goes on. We can expect our future opinions to be less accurate rather than more for such examples. Other examples include getting drunk, being gaslit, etc.

I use the terms "legitimate" vs "illegitimate" to describe opinion-revision processes that do and do not respect van Fraassen's reflection principle. This is just a definition of convenient terminology, not a deep account; what makes something legitimate vs illegitimate is still a big question. I expect the answer is complex in the same way that human value is complex.

Still, I think "legitimacy" provides a better handle on what the AI is supposed to be avoiding than "manipulation" -- many humans do not want to experience heroin, even though they'd predictably enjoy it and want more, because they do not consider that change a legitimate one. Similarly, humans would not want to talk to manipulative AI.

I have recently been thinking about how to construct ML training based on this design goal. Suppose you have a slow but trustworthy belief-revision process, and a fast, highly capable but untrusted belief-revision process -- which is trustworthy on problems where it gets good feedback. It seems potentially possible to create a fast-and-trustworthy process by asking the fast process to predict the slow process. (This fits the basic picture of alignment as uploading with more steps). One advantage of this approach is that we don't need to be able to give gold-standard feedback on any object-level questions; the AI is instead trained on our opinions and how they shift over time. The result is supposed to avoid human manipulation for the same reasons that (some) humans avoid hard drugs: because manipulation would violate the legitimacy of the feedback process. It is corrigible in the (weak) sense that, so long as it expects human feedback to be legitimate, cutting that feedback off would be negative-ev (viewed as lost information). Either it has already anticipated the belief change and updated accordingly (so there's no reason to block modifications the humans want to implement) or it wants the information and so won't block the update (because it trusts the humans) or it deems the human feedback illegitimate (which can either be because it is, or can be a mistake; in either case we've messed up the training process).
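A very small toy of "ask the fast process to predict the slow process" might look like the following. It is a sketch under heavy assumptions and not the training setup described above: the slow process is a made-up linear revision rule, the fast process is a one-parameter least-squares fit, and all names (`slow_revision`, `fit_fast_predictor`) are hypothetical.

```python
def slow_revision(belief, evidence):
    """Stand-in for the slow, trusted belief-revision process (hypothetical rule)."""
    return belief + 0.25 * (evidence - belief)


def fit_fast_predictor(examples):
    """Fit shift = k * (evidence - belief) by least squares on observed opinion shifts.

    `examples` holds (belief_before, evidence, belief_after) triples, i.e. records
    of how opinions moved, with no object-level ground truth involved.
    """
    numerator = sum((e - b) * (after - b) for b, e, after in examples)
    denominator = sum((e - b) ** 2 for b, e, _ in examples)
    k = numerator / denominator
    return lambda belief, evidence: belief + k * (evidence - belief)


# Training data: only before/after opinions produced by the slow process.
observed = [
    (b, e, slow_revision(b, e))
    for b, e in [(0.2, 0.9), (0.5, 0.1), (0.7, 0.8), (0.4, 0.4)]
]
fast_revision = fit_fast_predictor(observed)
```

The toy leaves out the hard part, namely whether a capable predictor stays faithful to the slow process off-distribution, so it only illustrates the shape of the training signal.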

I think this problem is real, and it's not solvable in the limit. But it might be fairly easily solvable to a fairly satisfactory degree. Drawing the line between manipulation and help requires a judgment call. But that call can be made by humans. This should give decent results. We won't get the future we "most want" by whatever criteria, but we can get a future we like an awful lot, and are pretty satisfied with, both in anticipation by our current criteria and by our ultimate criteria.

I agree with the core argument and problem statement. To restate it briefly: there's no sharp line between manipulation and giving helpful information. This is a necessary result of humans not having well-defined goals, values, or preferences over the long term. All of those change based on circumstances, and decisions and learning along a particular path. We can't clearly distinguish manipulation, tricking me into doing what you want, from helpful information, getting me to do what I want, because what I want isn't defined.

I also agree that none of the approaches you mention provide a crisp solution or establish what human desires "really" are. They are contingent and path-dependent. One could propose summing over all possible or all desirable paths, but that would be very loose and require judgment calls.

While the problem doesn't seem to have a crisp solution, I think existing alignment approaches probably solve it adequately - although avoiding manipulation does add some extra difficulty.

If you've got a value-aligned ASI, its values need to include not manipulating its humans by their own judgment. That's a nontrivial addition on top of otherwise wanting to do what they want, another sense in which you've got to get "do what they want" exactly right to really succeed. You do address this and I share your worry that this isn't necessarily adequate. But if it "wants" to not manipulate them but then winds up doing it anyway, how superintelligent was it? Of course, if it sort-of wants to avoid manipulation and also sort-of wants a future full of pretty pink ponies, the result might be a future with a little manipulation and a lot of pink ponies. Balancing all of those desires, including deontological desires like "don't manipulate", will be tricky.

I think some amount of actual feedback from the humans involved could clarify "what they want" even with a value-aligned ASI if you incorporate some degree of the instruction-following or corrigibility approach.

For an instruction-following or corrigible AI, more of the challenge falls on the user(s)/principal(s). You tell your intent-aligned ASI not to manipulate you in ways you'd consider manipulation, and to tell you about any edge cases, so you can weigh in and clarify its conception of what you'd consider manipulation.

Neither of those comes for free, and neither solves the problem perfectly. But I think they're decent solutions.

> You tell your intent-aligned ASI not to manipulate you in ways you'd consider manipulation

I don't want my ASI to interact with me in whatever way maximizes pretty pink ponies but that I-inside-the-thought-experiment wouldn't consider manipulation. I-outside-the-thought-experiment expect this would lead to severe manipulation, even though I-inside-the-thought-experiment wouldn't agree!

Well, I don't want that either, but I think it's an acceptable level of not-getting-exactly-what-we-want. It seems like it would still be something among the many things you want.

You're saying it will find methods that you wouldn't consider manipulation, but that will work just fine to convince you you want bunches of ponies? I think that means you're fine with ponies; it's just one of the many futures you'd like a lot.

Do you think it could manipulate you into things that you-now would find repugnant, while never manipulating you by your standards? That seems contradictory. If my ASI says "You asked me to tell you anything I'm doing that you might consider manipulative. Here's the biggest one. I like pink ponies a lot, so I'm going to keep presenting possible futures in ways that emphasize how awesome pink ponies are. I think you'll ultimately agree with me." You could either say "okay fine that doesn't seem like manipulation" or "don't do that, that's manipulative!"

And you'll get more chances to veto. If it never lies to you when asked, it seems like you've got the means to steer away from futures you don't like in any sense, if you bother.

Substitute hedonium for pink ponies. If an honest and corrigible AGI talks you into preferring a hedonium future, it seems like you actually like hedonium outcomes a lot.

If it doesn't tell you the truth about what it's doing, it's misaligned in a more fundamental way than just having some minor preferences that don't align perfectly with yours.

This might become a bit of an argument for corrigible vs. value-aligned AGI. If it's aligned to what-you-value-in-the-future that gives it almost unlimited leeway to force you into that outcome. If you add a deontological stricture against manipulation, that might not solve the problem at all. This is Steve's point. My argument is that a corrigibility or instruction-following alignment target does solve the problem adequately if not perfectly, if your power over the ASI is used even minimally wisely.

Are you thinking that an ASI that's corrigible will figure out how to manipulate you into not giving it instructions to warn you of its manipulation?

My sense is that if it's more motivated to follow instructions than to make ponies or hedonium, that and your actual desires win out.

> Do you think it could manipulate you into things that you-now would find repugnant, while never manipulating you by your standards? That seems contradictory. If my ASI says "You asked me to tell you anything I'm doing that you might consider manipulative. Here's the biggest one. I like pink ponies a lot, so I'm going to keep presenting possible futures in ways that emphasize how awesome pink ponies are. I think you'll ultimately agree with me." You could either say "okay fine that doesn't seem like manipulation" or "don't do that, that's manipulative!"

What I'd really do is turn this AI off because I didn't think it was safe. Which, like, good job to the hypothetical interpretability / honesty / corrigibility / contingency systems work that puts me in that hypothetical situation. But as you mention, maybe the AI could avoid getting here in the first place. (And even if I turn it off, that's cold comfort if someone else makes the other choice a few days later.)

I think manipulating me into not asking the question that leads to me shutting it off is definitely a strong choice that leads to more pink ponies. Or manipulating the situation so that it's not me who's asking the question, it's someone else. It can also modify what the honest answers to questions about its own behavior are by precommitting - it could deliberately choose the answer to be something less concerning, if that led to more pink ponies. I'm also pretty concerned that the standards for what counts as "honesty" will allow for strategy.

(Isn't it paradoxical to manipulate me into not asking the question if it's not supposed to make some fixed manipulation-detector fire? No, it just has to simultaneously optimize against both my behavior and the firing of the manipulation-detector, and it's the result of this optimization that I'm saying I expect to be manipulative according to me-outside-the-thought-experiment. There are probably more sophisticated (albeit currently unknown) ways of incorporating my standards into the AI's decision-making that wouldn't be so vulnerable, and if we figure them out I hope we use them to solve value learning.)

Partially I commented with this inside-the-thought-experiment versus outside-the-thought-experiment disconnect because I think it's interesting in general. It's kind of like Gödel sentences, or the vibrating record players from G.E.B. - me-inside-the-thought-experiment is a complicated enough system that he can be nudged in all sorts of ways if you understand him, and this property is hard to patch out. But the other part is the real-world case of replacing "pink ponies" with "human giving positive feedback signal".

Clever AI that we build to "just follow instructions" will, on the current paradigm, probably have some consequentialist desires about positive feedback signals and various correlates. As you can tell, I'm pretty pessimistic: I expect this to end in bad stuff.

To me the most promising solution is to get the AI to not optimize for influencing people's beliefs (3.4) except in certain permitted (often myopic) ways that depend on the situation. Some candidate guidelines:

- Early on, helping AI companies understand risks seems crucial and allowed.

- When they help with development of the model spec, they can help people understand relevant considerations to the model spec, but should do so myopically and should not consider consequences downstream of those people's beliefs (e.g., on the model spec, on the people's actions). This has downsides in terms of slop like you say, but: (1) I think myopically influencing people's beliefs on requested questions does a huge amount to help and (2) I think it could also be permissible to proactively take actions based on downstream consequences sometimes if this is done openly and without much optimization pressure, ideally making arguments a human could understand (e.g., rather than just framing its discussion in a certain way to achieve a different model spec, it should instead leave a note explaining why it worries the default interpretation might lead to a suboptimal model spec).

- Later on, during reflection, this might look like AIs only being allowed to myopically provide guidance on (some) descriptive claims (aiming for something similar to 3.1). I share worries about reflection being high-variance and underspecified, but I think this is a somewhat fundamental limit on value idealization that doesn't have much to do with AI.

I also share worries that consequentialist goals can erode all of these guidelines, but this is a somewhat separate concern (separating deliberation and competition seems good here).

That suggests an approach where AIs simply learn to make a human-like gestalt assessment of what’s good vs bad (according to humans in general, and according to this particular human specifically), and then do the good things but not the bad things. Not manipulating people would, we imagine, naturally come along for the ride.

If we do something like that, I wouldn’t think of it as a solution to the True Name thing, but rather giving up on the True Name thing in favor of a different approach entirely, one relying more directly on (real or simulated) human judgment. See e.g. “Act-based approval-directed agents”, for IDA skeptics.

This kind of approach will probably sound like the very obvious solution for readers who work on LLMs. No comment on LLMs, but for the problem I’m working on (brain-like AGI), it just brings me right back to where I started in §1.2: if we’re learning what’s good by the gestalt of human judgment and culture, and if human judgment and culture can themselves be gradually shifted over time, then this might not be an adequate bulwark against the AGI’s consequentialist desires.

I'm certainly in favor of doing good things and not bad things.

I think it's okay for non-manipulation to be some nearly alignment-complete thing you need to make corrigibility work (which itself is, imo, "the alignment-complete-and-then-some thing you need to make trial and error reliably work"), or to make RL on human feedback work (along with the rest of eliciting latent knowledge). But yeah, if by "True Name thing" you mean the hope that non-manipulation wasn't going to be very alignment-complete at all, then oh well.

I think the way you put non-manipulation on par with consequentialist desires is to think in terms of evaluating future trajectories (evaluating futures using macrostates that simplify across time might be equally good?). This makes certain sorts of mistakes (like evaluating modeled future non-manipulation by calling the modeled future state of human culture) harder to make. There's still the "moon following you while you work at NASA" problem where you don't want the AI to evaluate the non-manipulation content of a trajectory in a sort of high-level way while using a more fine-grained method to evaluate the achievement of some consequentialist goals. And since there are computational advantages to planning step by step rather than imagining the entire future of the universe, there's the problem of doing that translation without privileging one part of your motivational system over another (seems hard, might be worth a toy model).

if we’re learning what’s good by the gestalt of human judgment and culture, and if human judgment and culture can themselves be gradually shifted over time, then this might not be an adequate bulwark against the AGI’s consequentialist desires.

…

I think the way you put non-manipulation on par with consequentialist desires is to think in terms of evaluating future trajectories

Yeah, exactly, when my shoulder-optimist argues his case, he brings up the idea that the virtue-ethics-y side of the AI would maybe notice that the AI is engaging in a systematic pattern of behavior that has the effect of gradually shifting human judgment and culture over time, and it would see that as bad, and it would vote against behaving in that way. But it also might not. That’s why I said (in §1.2 and §4.2) that I was unsure about how bad a problem this is. I’m kinda stuck on that right now, and not sure how to proceed, except to try to engineer some different solution that’s easier for me to reason about.

I think this is overly committed to the hypothesis that the various concepts intuitively necessarily route through an imagined free will.

Take non-manipulation. I don't think I need to invoke non-causal free will, or even free will at all to describe that. In the deceptive case: H has some preferences. A has some preferences. A communicates falsehoods or partial truths which (predictably) cause H to have incorrect beliefs. H acts on those beliefs (in a way which furthers A's preferences).

Now, this also has the effect of making the activity in the world steer more for A's preferences than H's, at least locally. If you have an ontology where preferences arise from non-causal free will or whatever, then you might also say that A's free will subdued H's in that exchange, I guess.

Am I correctly engaging with what you're describing here?

I think that any particular example might not involve explicitly invoking free will, but it’s kinda the water we’re swimming in, and over a lifetime of thinking in those terms you wind up with a soup of connotations and associations that are kinda dovetailing with a free will worldview even if they’re not explicitly about that.

Regardless, you ask whether this post is “overly committed” to that hypothesis. I hope it’s not! I tried to cover all the other connotations of “manipulation” that I could think of in §3–§4. Thus, your particular example with “H” and “A” seems to mostly lean on §3.4 (A is optimizing for H’s future preferences) and §4.2 (an observer sympathetic to H would see the interaction as bad).

(It’s possible that I structured the post in a way that over-emphasized the free will thing, but I do think the free will thing is tricky and important and hence deserves disproportionate space in the text.)

I guess in my takeaway from the post (haven't reread since yesterday), the thrust was like: non-deception, corrigibility etc probably don't have 'true names', because they're concepts fundamentally attached to a fake ontology. In particular, non-causal vitalistic free will sort of things.

But actually I appear to be able to describe deception and other things in terms which don't route through that kind of ontology, at all, and models like that are how I and many of my interlocutors discuss such things. Curiously I don't think any entries in section 3 or 4 correspond to the description I gave - agree that 3.4 is maybe closest (and on a different day I might have used a case example closer to that).

To me that refutes the paraphrased thrust above.

I grant that there's something funny with 'intentional stance' at all, where in principle one can describe any instantiated agent in terms of lower-level mechanics. So I guess one might say that ontology is mistaken (I don't think you would)? But that's just like all of physics basically. Complex systems are like that. You get emergence and have to deal with it. I hold that beliefs and intentions and preferences are worthy concepts to build with (and I think you do too).

Indeed various colloquial conceptions of agents are more mystical and incoherent. But I don't think that has bearing on whether there's a true name for the concepts discussed in more coherent terms.

I guess in my takeaway from the post (haven't reread since yesterday), the thrust was like: non-deception, corrigibility etc probably don't have 'true names', because they're concepts fundamentally attached to a fake ontology. In particular, non-causal vitalistic free will sort of things.

Not exactly … What I had in mind overall was more like: the discussion of human intuitions in §2 “sets the stage” by (A) providing some brainstorming aid and grounding as we embark on the Quest for a True Name For Manipulation in §3–§4; and §2 also “sets the stage” by (B) seeding the idea that we should at least be open to the possibility of such a True Name not existing at all, rather than it being an obvious thing, like how I know that a sock exists even if I don’t know how to mathematically define it, because I have an intuitive notion of a sock and it’s all-but-certain that this intuitive notion can in principle be formalized into some real-world notion of sock-ness. Whereas for manipulation, maybe it can be construed as pointing to something real, or maybe it can’t, but I think it’s important to be in a mindset where this is not obvious, unlike for socks.

Then the alignment-relevant meat of the post is §3–§4, along with further discussion in §5.

Sorry if I wrote things up in a confusing way, but I’m not seeing an obvious way to improve it right now.

There's also a curious and troubling aspect of humans that we mingle the types of beliefs, intentions, preferences, and intrinsic vs instrumental stuff. We're also malleable which makes things like CEV potentially underdetermined. I'm not entirely sure what to do about that! Though I unironically anticipate that the right virtue orientation can help a lot with selecting 'acceptable enough' paths through that highly path-dependent space.

Another example is @jacob_cannell in Empowerment is (almost) All We Need (2022). To his credit, he raises the issue that human desires are manipulable in the section “Potential Cartesian Objections”. But then he waves this issue away in a brief sentence: “These cartesian objections are future relevant, but ultimately they don't matter much for AI safety because powerful AI systems - even those of human-level intelligence - will likely need to overcome these problems regardless.”

Well... no - looking back on that post, the 'potential cartesian objections' are all just technical complexity of the precise mathematical definition of empowerment, comparing a few options.

I think Jacob is suggesting that the AGI will autonomously develop a robust notion of self-empowerment, including “what it means for me (the AGI) to not get manipulated”, and then it can (somehow?) transfer that notion to humans.

Not quite - yes I do think that powerful AGI will likely have a notion of self-empowerment - as it seems to just be the most natural general form of unsupervised learning objective for control/RL. Agents trained on that universal criterion - almost by definition - learn to control their environments effectively for any specific (sufficiently long term) utility function. The self-empowerment objective is to motor control, planning and causal modeling as self-supervised prediction objectives are to representation and world model learning. It's related to the free energy principle.

But that by itself just results in sociopathic dangerous AGI. Evolution faced the same problem in a sense - self-empowerment is useful but by itself would just result in organisms that focused on controlling their world and living forever but would never care to reproduce.

Anyway - in regards to your original issue - the manipulability of human desires, I do agree it is a complexity for any alignment solution. Note that empowerment is not the absence of being manipulated. Sure it does correlate with being the manipulator of the world rather than the manipulated, but an AI optimizing for human empowerment could face scenarios where manipulating humans is just the best way to maximize their long term potential. That is hardly unique to empowerment objectives, and is a tradeoff any alignment solution will need to navigate. On a more mundane level it surfaces in parent-child relationships for example.
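For readers who haven't seen empowerment written down, here is a minimal sketch of one common formalization: n-step empowerment as the channel capacity from an agent's action sequences to its resulting state. In a deterministic environment that capacity reduces to the log of the number of distinct states the agent can reach. The 5x5 gridworld, the action names, and the `empowerment` helper are all assumptions made up for illustration; they are not taken from the post under discussion.

```python
import math
from itertools import product

# Hypothetical toy environment: a 5x5 grid with solid borders.
SIZE = 5
ACTIONS = {
    "up": (0, -1),
    "down": (0, 1),
    "left": (-1, 0),
    "right": (1, 0),
    "stay": (0, 0),
}


def step(state, action):
    """Deterministic transition: move if the target cell is inside the grid."""
    x, y = state
    dx, dy = ACTIONS[action]
    nx, ny = x + dx, y + dy
    if 0 <= nx < SIZE and 0 <= ny < SIZE:
        return (nx, ny)
    return state  # bumping into the border leaves the agent in place


def empowerment(state, horizon):
    """n-step empowerment in bits for a deterministic environment.

    With deterministic dynamics, the channel capacity between action
    sequences and final states equals log2(number of reachable states).
    """
    final_states = set()
    for sequence in product(ACTIONS, repeat=horizon):
        current = state
        for action in sequence:
            current = step(current, action)
        final_states.add(current)
    return math.log2(len(final_states))


center = empowerment((2, 2), horizon=2)
corner = empowerment((0, 0), horizon=2)
print(f"center: {center:.3f} bits, corner: {corner:.3f} bits")
```

The agent in the center of the grid has more reachable futures than the agent in the corner, so it scores higher. Note that nothing in this quantity refers to how the agent came to be in that state, which is one way to see why empowerment is not the same thing as the absence of manipulation.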

On alternatives to brute consequentialism, I was intrigued by Grietzer's formulation of virtue as 'promote x x-ingly'. e.g. to be just is to promote justice justly, to be honest is to promote honesty honestly, ... See also. It's only a few pieces of a picture, but looked like a promising direction, to me.

I’m mildly skeptical that we can drop consequentialist preferences altogether (I mean, as first-class preferences, I’m not just talking about ‘consequentialist planning as a means-to-an-end for being helpful right now’ and similar). I don’t have any airtight proof, and I really have some uncertainty here, but FWIW I’m partly getting that from a general intuition that the people doing (and figuring out) important and novel things in the world, the kinds of things that would help with the rogue ASI problem, are people who really deeply care about simulacrum level 1 rather than 3, and you only get that from having consequentialist preferences as major first-class components of the motivation system.

A different idea is that we can build top-level preferences out of a mix of consequentialist desires and non-consequentialist (e.g. virtue-ethics-y) desires. And yeah, that seems like the obvious “Plan A” to me. The question is whether it would actually work, see §1.2.

I don't understand how simulacrum levels come into it.[1] Are you taking me to mean 'virtue signalling' when I say virtue? No! Adherence to honest and earnest communication, for example, is a commonly recognised virtue.

[1] FWIW I also have never got what is supposedly ordinal about the simulacrum levels beyond 1, the honest one. The other 'levels' just look like various orthogonal breeds of fakery, to me. Haven't scrutinised deeply.

Oh, hmm, good point, thanks. Let me try again:

When I think of humans who get difficult things done, or figure difficult things out, they tend to care about accomplishing those things, a lot, and in a direct and explicit way, not just e.g. as a facet of what kind of person they see themselves as. I mean, maybe “what kind of person I see myself as” has something to do with how they originally came to care about those things, but it’s not what they’re explicitly thinking about. They’re thinking directly about the object-level prize at the end of the journey, and how to get that prize.

E.g. plenty of climate change activists think of climate change activism as a good and virtuous thing to do, but I think the subset of climate change activists who are really moving the needle are the ones who are directly thinking about climate change being directly bad, and really want it to stop, and are focused directly on how to make that happen.

E.g. plenty of mathematicians think of math as a good and praiseworthy activity, but I think that the person who will solve the Riemann hypothesis will be a person who is (in addition to being smart etc.) really damn curious about why the Riemann hypothesis is true, and focused directly on figuring that out. Or they’re really damn eager to become famous by solving the Riemann hypothesis, or whatever else.

It seems to me that this is a general pattern—i.e., we need direct-consequentialism not just consequentialism-incidentally-arising-from-virtue to accomplish difficult novel tasks—and my hunch is that this pattern generalizes to brain-like AGI. If so, then we will face the problem of balancing consequentialist direct top-level goals with non-consequentialist direct top-level goals, rather than merely facing the (probably easier) problem of avoiding the former altogether.

(This is all a lightly-held opinion.)

Path-dependence of values is defeated with aggregation over the possible paths that should have a say in what the values should be. Aggregation over many possibilities takes place in an updateless view from before those possibilities diverge. What kinds of possibilities should contribute to defining values is determined by values. And the possibilities should perhaps be shaped with the aid of aggregated values, to channel their counsel.

This sets up an analogy between CEV and updateless decision making, where the updateless core is working to define values, instead of dictating the joint policy for (the instances of an agent in) the possible paths of future development of a world. This updateless core still gets to do something within those paths according to the values it figured out so far, but it's also considering what's happening there to define its aggregated values further, so that the aggregated values are given by some fixpoint of this two-directional process of aggregation of values from future paths (which the values consider to be legitimate and uncorrupted sources for aggregation) and influence by values on the future paths (carefully, according to what the aggregated values have figured out so far). Alignment is then mostly a property of these hypothetical future paths (whether they retain legitimacy and will be given a bit of influence over the aggregated values), while corrigibility is mostly a property of the updateless core (with respect to some future path, whether the updateless core is going to listen to the new things that path figures out about values, to include them in aggregated values).

As in updateless decision making, the updateless core doesn't actually observe the future paths when making decisions about values (just as an updateless agent doesn't take into account its observations when making decisions about the joint policy). It determines aggregate values, and then it's the role of those values to take the concrete details of each future path into account. The updateless core can only consider any given possible future as one out of the collection of all of them, the way Solomonoff induction considers all possible programs. There are probably ways to make this more tractable, things like Monte Carlo simulations, abstract interpretation, or just straight up reasoning by any means, including mathematical reasoning and machine learning. And possible futures can't see (or be influenced or judged by) the final values the updateless core comes up with, since it's still being computed as they develop, they can only see partial preliminary values. So the possible futures are in a state of acausal interaction with the updateless core, with logical time running forward in both, defining the fixpoint of fully determined aggregate values (the CEV of these futures) concurrently with the futures themselves running forward (the actual or hypothetical living of the world, which is not primarily about defining values).

The updateless core coordinates the possible futures, the way an updateless agent coordinates its instances. And it cares about some of the in-principle possible futures and not others, the way an updateless agent only cares about some possible worlds. Its influence over the possible futures is counsel to the extent these futures are represented in the aggregate values that carry its influence. It would be manipulation if the aggregate values are sufficiently alien to a particular possible future, in which case it's possibly not a legitimate future from the point of view of the updateless core in the first place (and correspondingly, the updateless core is not corrigible to that possible future).

But alas, I currently think the most important use-case for AGI is figuring out true important things about the world (esp. related to ASI alignment and strategy) and explaining those things to the human. For this process to be effective, we cannot have an AGI that’s unconcerned with what we wind up believing after the discussion—that’s a recipe for slop-and-doom, or just an AI that’s incomprehensible and unhelpful. Rather, I want the AI to be like a disagreeable nerd that wants us to have a good understanding, notices areas where we’re confused, and is brainstorming and strategizing on how to help set us straight by improving its clarity and pedagogy. This strategizing is clearly a form of optimization, and the target of the optimization is related to the human’s eventual desires (well, it’s nominally about the human’s beliefs, but beliefs and desires are entangled)

What about if AI optimizes for humans fully understanding the evidence and arguments that it has discovered but does not optimize for what humans actually end up believing about the bottom line?

So if it discovered powerful arguments for misalignment, it would make sure to explain them in a way that the humans fully understand those arguments. But if humans had some prior/bias that caused them to dismiss the argument even when they fully understand it, the AI would not try and optimize around that.

Max’s stopgap plan would involve comparing the human’s values to what they’d be if the AI did nothing. I think he understates how bad that stopgap plan is. Even providing straightforwardly-true factual information can change what a person wants, right?

Yes. That's right. And I am (among other things) worried about an AI that warps my values by telling me a series of facts.

But I want to clarify that I'm talking about terminal values, not strategic sub-goals. The stopgap plan is 100% able to tell me that the store is closed, thus changing my plan of going to the store. What it shouldn't do is tell me intense stories about the suffering of pigs and thereby change how much I care about pigs.[1]

Why do you think this stopgap is so bad? (I agree that it's bad, but it seems like you see it as worse than me.)

[1] Unless this story is necessary to counteract another pressure such that I cleave closer to the null-action counterfactual.
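To make the store-versus-pigs distinction concrete, here is a toy rendering of "compare against the null-action counterfactual, but only on terminal values". It is a sketch under heavy assumptions, not the actual stopgap proposal: the human is modelled as a dict with separate `beliefs` and `terminal_values` entries, and the two message functions and their numeric effects are invented for illustration.

```python
import copy


def null_action(human):
    """Counterfactual in which the AI says nothing: the human is unchanged."""
    return copy.deepcopy(human)


def tell_store_closed(human):
    """A factual update: changes beliefs (and hence plans), not terminal values."""
    after = copy.deepcopy(human)
    after["beliefs"]["store_open"] = False
    return after


def tell_pig_stories(human):
    """Hypothetical persuasion that shifts how much the human cares about pigs."""
    after = copy.deepcopy(human)
    after["terminal_values"]["pig_welfare"] += 0.5
    return after


def terminal_value_shift(human, action):
    """L1 distance between terminal values after `action` and after doing nothing."""
    baseline = null_action(human)["terminal_values"]
    outcome = action(human)["terminal_values"]
    return sum(abs(outcome[key] - baseline[key]) for key in baseline)


human = {
    "beliefs": {"store_open": True},
    "terminal_values": {"comfort": 1.0, "pig_welfare": 0.2},
}

for action in (tell_store_closed, tell_pig_stories):
    print(action.__name__, terminal_value_shift(human, action))
```

In this toy, the store message registers zero shift even though it changes what the human will do next, while the pig stories register a nonzero shift. The hard part, which the sketch assumes away, is that a real mind does not come with a clean `beliefs` / `terminal_values` split to read off.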

Hmm. I guess when I wrote that part, I was imagining a kind of dichotomy, where one branch is “don’t change what the human is trying to do right now” (and then the AI wouldn’t say that the store is closed), and the other branch is “don’t change what the human would want after infinite ideal reflection” (and then I don’t know how to install that motivation into the particular AI architecture I have in mind).

I guess you’re saying that that’s a false dichotomy, because there’s a middle ground in between those? Have you written more about what constitutes a “terminal goal” in your view? (Even if you don’t have a rigorous definition, I’m interested in examples or intuitions.) Thanks.

I haven't written at length about the distinction between terminal and instrumental goals myself (there's a bit at the start of CAST, but I don't belabor it), but I think Eliezer did a good job in 2007. In my own words, I would say that it makes some sense to divide the planning system of the mind into a portion that is a model of the world, where it makes sense to talk about truth and so on, and another section of the mind that is about judging the desirability of various potential world states and/or trajectories. That second portion (or an important component of it) is what I would call the "values" of the agent, and when the values are put in contact with concrete outcomes that are judged highly compared to others, I would call them terminal goals. Instrumental goals are then constructed as a second-order operation on top of one or more terminal goals (and the dynamics of the world model), so that we can shortcut planning as a question of how to first get the instrumental goal so that we can later move from that state to the terminal goal.

As a concrete example, I wanted to go home after work last night (which is itself an instrumental goal in the service of many other terminal goals, such as comfort, but which we can treat as terminal). I planned to drive in my car through a small town to get home, and thus steered my car towards the town, because "get to the town" was instrumental to my (more) terminal goal. As I approached, I found out that there had been an accident and that the road was closed. If my world model had included this fact, I would not have identified "get to the town" as an instrumental goal. Once I was aware of it, I changed my plan so that I drove down a country detour that went around the town.

I do not consider learning about the accident to have changed my values or the way that I judged outcomes. Instead, it changed my plan.
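The driving example can be sketched as a tiny planner, to show which part of the system changes when the road closes. This is only an illustration under stated assumptions: the place names, the road graph, the `VALUES` table, and the helper functions are all hypothetical, and breadth-first search stands in for whatever planning a mind actually does.

```python
from collections import deque

# Values: how desirable each end state is (treated as terminal for this example).
VALUES = {"home": 1.0}


def plan_route(roads, start, goal):
    """Breadth-first search over the world model; returns a list of places or None."""
    queue = deque([[start]])
    seen = {start}
    while queue:
        path = queue.popleft()
        if path[-1] == goal:
            return path
        for neighbor in sorted(roads.get(path[-1], ())):
            if neighbor not in seen:
                seen.add(neighbor)
                queue.append(path + [neighbor])
    return None


def best_plan(roads, start, values):
    """Pick the most valued end state, then plan a route to it."""
    goal = max(values, key=values.get)
    return plan_route(roads, start, goal)


def instrumental_goals(plan):
    """Intermediate waypoints: wanted only because they lead to the end state."""
    return plan[1:-1]


# World model before learning about the accident.
world_model = {
    "work": {"town", "country_road"},
    "town": {"home"},
    "country_road": {"detour"},
    "detour": {"home"},
}
plan_before = best_plan(world_model, "work", VALUES)

# New information: the road from town to home is closed. Only the model changes.
updated_model = {place: set(nbrs) for place, nbrs in world_model.items()}
updated_model["town"].discard("home")
plan_after = best_plan(updated_model, "work", VALUES)

print(instrumental_goals(plan_before))  # waypoints under the old world model
print(instrumental_goals(plan_after))   # waypoints under the updated world model
```

Between the two calls to `best_plan`, `VALUES` is never touched; only the road graph is edited, and the instrumental waypoint "town" drops out of the plan as a consequence. That is the sense in which learning about the accident changed the plan rather than the values.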

Does that make sense?

Thanks. I just edited the OP to say that my original text might be an overstatement.

I still think the stopgap plan doesn’t help me-in-particular, because I’m working on how to install goals in brain-like AGIs, and I have ideas that seem promising but only work for a limited number of goals (they kinda have to be simple, concrete, “atomic”, and/or directly related to people’s feelings, and/or have a ground truth that can be calculated explicitly, more-or-less). This thing we’re talking about here (involving a distinction between the supervisor’s instrumental vs terminal goals) is pretty complex and abstract, and not something I have any good idea of how to install as a goal / motivation, alas.

LLMs are pretty different, no comment on that.

1.1 Tl;dr

Alignment is often conceptualized as AIs helping humans achieve their goals: AIs that increase people’s agency and empowerment; AIs that are helpful, corrigible, and/or obedient; AIs that avoid manipulating people. But that last one—manipulation—points to a challenge for all these desiderata: a human’s goals are themselves under-determined and manipulable, and it’s awfully hard to pin down a principled distinction between changing people’s goals in a good way (“providing counsel”, “providing information”, “sharing ideas”) versus a bad way (“manipulating”, “brainwashing”).

The manipulability of human desires is hardly a new observation in the alignment literature, but it remains unsolved (see lit review in §3 below).

In this post I will propose an explanation of how we humans intuitively conceptualize the distinction between guidance (good) vs manipulation (bad), in case it helps us brainstorm how we might put that distinction into AI.

…But (spoiler alert) it turns out not to really help, because I’ll argue that we humans think about it in a deeply incoherent way, intimately tied to our scientifically-inaccurate intuitions around free will.

I jump from there into a broader review of every approach that I can think of for writing a “True Name” for manipulation or things related to it (empowerment, agency, corrigibility, culpability, etc.), or indeed for any other method of robustly getting future AGIs to be able to talk to people without trying to manipulate those people’s desires. I argue that none of them provides much of a path forward on the particular technical alignment problem I’m working on. Indeed, my current guess is that none of these things have a “True Name” at all, or at least not one that’s useful for the technical alignment problem.

1.2 Bigger-picture context: why is this issue so important to me?

I’ve been investigating brain-like-AGI safety plans that would involve making AGI with a motivation system loosely inspired by the prosocial aspects of human motivation. To oversimplify a bit, this kind of motivation system would include an impersonal-consequentialist aspect (related to what I call “Sympathy Reward”) that leads to wanting humans (and perhaps animals etc.) to feel more pleasure and less displeasure. But by itself, this part would make a funny kind of ruthless sociopath ASI that bliss-maxxes by, say, strapping everyone to tables on heroin drips. Or maybe it would just kill us all and tile the universe with hedonium. Granted, bliss-maxxing is not the worst possible future, as these things go. But we should aim higher!

So then the second ingredient in the motivation system would be a kinda virtue-ethics-y thing, related to what I call “Approval Reward”, which has more relation to pride, self-image, respecting other people’s preferences, and proudly internalizing social norms.

Alas, my current thinking is a bit akin to the “Nearest Unblocked Strategy” problem. If we put both those things together—a consequentialist desire plus a suite of virtue-ethics-y motivations—I’m worried that the consequentialist desire will eventually “win”. For example, if the AGI wants to eventually get to hedonium, and the AGI also wants to follow societal norms, it might find its way to hedonium via a more gradual route, one that involves gradually and unintentionally, but inexorably, changing societal norms in the direction of hedonium.[1] The virtue-ethics-y motivation just seems more squishy and slippery than the consequentialist desire, especially when it routes through manipulable human desires, such that I’m worried it will not be an adequate bulwark against ruthless consequentialism.

…Or maybe it would be fine? I’m not sure. But I’m very much on the hunt for some different or complementary approach to AI motivation, one that I can reason about more easily and have more confidence in.

So in that context, it would be nice to pin down some notion of “manipulation”, “respect for preferences”, and related notions, in a well-defined way that’s robust to specification-gaming and especially ontological crises.

For related discussion, see @johnswentworth’s discussion of “True Names” at “Why Agent Foundations? An Overly Abstract Explanation” (2022), or my own “Perils of under- vs over-sculpting AGI desires” (2025), specifically §8.2.2: “The hope of pinning down non-fuzzy concepts for the AGI to desire”.

2. How do humans intuitively define empowerment, agency, manipulation, etc.?

2.1 Background: human “free will” intuitions

Here’s a modified excerpt from my Intuitive Self Models (ISM) series, summarizing a few key points from ISM Post 3: The Active Self:

More precisely: If there are deterministic upstream explanations of what the Active Self is doing and why, e.g. via algorithmic or other mechanisms happening under the hood, then that feels like a complete undermining of one’s free will and agency. And if there are probabilistic upstream explanations of what the Active Self is doing and why, e.g. “if my stomach is empty, then I’ll start wanting food”, then that correspondingly feels like a partial undermining of free will and agency, in proportion to how confident those predictions are. For example, I might see myself as being somewhat “puppeteered” by the ghrelin hormone that my empty stomach is pumping into my bloodstream.

…Needless to say, this whole intuitive ontology is pretty messed up, in the sense that nothing in it is a veridical, observer-independent accounting of what is happening in the real world (ISM §3.3.3). And indeed, it’s somewhat specific to mainstream western culture (ISM §3.2). Outside of “mainstream western culture”, we find that other intuitive ontologies also exist; I won’t discuss them in this post since I don’t understand them very well, but I’m currently pessimistic that they will help solve my AI-alignment-related problems.[2]

2.2 Our free-will-infused intuitive notions of empowerment, agency, manipulation, corrigibility, responsibility, etc.

I think our common-sense notions of empowerment, agency, manipulation, corrigibility, and so on are intimately tied with this free-will-related intuitive ontology. In particular, I claim:

Our intuitive notion of empowerment is related to someone's acausal free will being able to accomplish whatever it wants to accomplish. Our intuitive notion of agency (in the context of e.g. “AI will enhance human agency”) is pretty similar.

Our intuitive notion of being manipulated is related to a person (call him Ahmed) taking an action A with the property that, in our intuitive causal world-models, the chain-of-causation leading to A does not ultimately trace back to the acausal force of Ahmed’s free will, but rather to the free will of some third-party who manipulated Ahmed.

(For example, if Bob deceives me about what a button does, and then I press the button, then our intuitive conceptualization of the situation says that the button was pressed ultimately because of Bob’s acausal free will working towards Bob’s desires, not because of my acausal free will working towards my desires. I was an instrument to Bob.)

Our intuitive notions of corrigibility, helpfulness, and obedience each have their own nuances, but they all substantially overlap with the above ideas: they connote increasing a supervisor’s empowerment and agency, and decreasing the amount that the supervisor gets manipulated. In other words: they suggest that important things are happening more as a result of the supervisor’s free will doing what it wants, and less as a result of other people’s (or AIs’) free wills doing what they want through the supervisor’s own actions.

For example, if a human wants to shut down an AI, the AI could prevent that by disabling the shutdown button, or the AI could prevent that by using its silver tongue to convince the human to not want to shut it down. Both of these would be contrary to what people normally mean by “corrigibility”, and in the latter case we conceptualize that as an undermining of the supervisor’s free will.

Our intuitive notions of culpability and responsibility, as in “Joe is responsible for the failure”, involve tracing back the chain-of-causation to see whose acausal force of free will it ultimately traces back to. This is kinda the flip side of manipulation (above): if I trick someone into unknowingly robbing a bank, or brainwash them into wanting to rob the bank, I would be at least partly and maybe fully responsible for the bank-robbing, because the bank got robbed ultimately because of my acausal free will, which wanted the bank to be robbed.
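To make the "tracing back" picture concrete, here is a toy sketch of the intuitive (messed-up) ontology, not a claim about how anything really works. Everything in it is an illustrative assumption: the event names, the two hand-written causal graphs, and the convention that nodes prefixed with `will:` stand in for an "acausal free will" root.

```python
# Each graph maps an event to its direct causes, as the *intuitive* model sees them.
# Nodes named "will:<person>" are hypothetical stand-ins for "acausal free will" roots.
TRICKED = {
    "bank_robbed": ["dupe_acts"],
    "dupe_acts": ["dupe_believes_lie"],   # the dupe's action routes through the lie...
    "dupe_believes_lie": ["trickster_lies"],
    "trickster_lies": ["will:trickster"], # ...which bottoms out in the trickster's will
}
UNCOERCED = {
    "bank_robbed": ["robber_acts"],
    "robber_acts": ["will:robber"],       # the action bottoms out in the robber's own will
}

def responsible_parties(event, causes):
    """Walk upstream from `event` and collect the free-will roots it traces back to."""
    roots, stack, seen = set(), [event], set()
    while stack:
        node = stack.pop()
        if node in seen:
            continue
        seen.add(node)
        if node.startswith("will:"):
            roots.add(node[len("will:"):])
        stack.extend(causes.get(node, []))
    return roots

print(responsible_parties("bank_robbed", TRICKED))
print(responsible_parties("bank_robbed", UNCOERCED))
```

Note that all the work is done by where the graph-writer chose to put the `will:` roots; the traversal itself is trivial. That is the sense in which the intuitive notion depends on treating free will as an uncaused starting point.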

2.3 Another dimension: “counsel” vs “manipulation” as an emotive conjugation

There’s another dimension to how we intuitively think about these concepts: the dimension of positive or negative vibes. For example, if some kind of interaction seems good,[3] then we’re more likely to call it “providing counsel”, and if it seems bad, then we’re more likely to call it “an attempt to manipulate me”. The vibe is important in itself, over and above any particular aspect of the interaction.

I don’t think this dimension is separate from the “free will” discussion above, but rather complementary and compatible, because in general, if I have a motivation I’m happy about, I’ll tend to conceptualize it as an ego-syntonic component of my free will, while if I have a motivation I’m unhappy about, I'll tend to conceptualize it as an ego-dystonic urge undermining my free will. See ISM §3.5.4 for details.

3. If the intuitive definitions of “manipulation” etc. reside in a messed-up ontology, has the alignment literature found any alternative, better way to define these concepts?

By analogy, I think intuitive physics is a messed-up ontology in certain (far more minor) ways, and yet many intuitive physics concepts can be (imperfectly) mapped to rigorously-definable concepts in real physics. Can we find something like that for “manipulation”, “empowerment”, and so on, and then build those concepts into AI motivations?

Alas, as far as I can tell, that’s an unsolved problem, and might not have a solution at all. Here’s a brief lit review:

3.1 Compare what the human wants to what the human would want under the null policy?

First, @Max Harms in “Formal Faux Corrigibility” (2024) acknowledges that he doesn’t know how to formally define a distinction between counsel (good) vs manipulation (bad), and suggests as a stopgap to simply penalize the AI for doing either. (“This seems like a bad choice in that it discourages the AI from taking actions which help us update in ways that we reflectively desire, even when those actions are as benign as talking about the history of philosophy. Alas, I don’t currently know of a better formalism. Additional work is surely needed in developing a good measure of the kind of value modification that we don’t like while still leaving room for the kind of growth and updating that we do like.”)

Max’s stopgap plan would involve comparing the human’s values to what they’d be if the AI did nothing. I think he understates how bad that stopgap plan is. Even providing straightforwardly-true factual information can change what a person wants, right? (Update: maybe that’s an overstatement, see Max’s reply in the comments.)

Alternatively, one could take as a baseline what the human would eventually figure out on their own, given infinite time and good circumstances under which to reflect. I.e., we could say that an AI is “manipulating” if it’s pushing the person away from the conclusions of their imagined idealized copy with infinite time, and the AI is “providing counsel” if it’s pushing the person towards that. I have some concerns,[4] but yeah sure, that seems worth considering. Alas, it doesn’t solve my problem, because I have no idea what reward function, training environment, etc., could directly lead to a brain-like AGI with that (rather abstract) motivation.
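Here is a toy numerical sketch of the two baselines in this section. It is not Max's formalism: representing "what the human wants" as a probability distribution over two plans, measuring change with total variation distance, and all of the numbers are assumptions made purely for illustration.

```python
def total_variation(p, q):
    """Total variation distance between two distributions given as dicts."""
    keys = set(p) | set(q)
    return 0.5 * sum(abs(p.get(k, 0.0) - q.get(k, 0.0)) for k in keys)

# Hypothetical distributions over what the human ends up wanting.
under_null_policy = {"plan_a": 0.80, "plan_b": 0.20}  # the AI does nothing
after_true_fact   = {"plan_a": 0.30, "plan_b": 0.70}  # the AI states a true, relevant fact
after_deception   = {"plan_a": 0.95, "plan_b": 0.05}  # the AI tells a self-serving lie
idealized         = {"plan_a": 0.10, "plan_b": 0.90}  # imagined endpoint of long reflection

def null_policy_penalty(after):
    """Stopgap baseline: penalize any change relative to the null policy."""
    return total_variation(after, under_null_policy)

def moves_toward_idealized(after):
    """Alternative baseline: is the human now closer to the idealized endpoint?"""
    return total_variation(after, idealized) < total_variation(under_null_policy, idealized)

for name, dist in [("true fact", after_true_fact), ("deception", after_deception)]:
    print(name, round(null_policy_penalty(dist), 3), moves_toward_idealized(dist))
```

With these made-up numbers, the null-policy penalty punishes the true fact more heavily than the lie, simply because the true fact changed the person's mind more. The idealized baseline sorts the two cases the intuitive way, but only because `idealized` was written down by hand, which is exactly the part nobody knows how to get into a reward function.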

3.2 The AI learns self-empowerment and generalizes to other-empowerment?

Another example is @jacob_cannell in Empowerment is (almost) All We Need (2022). To his credit, he raises the issue that human desires are manipulable in the section “Potential Cartesian Objections”. But then he waves this issue away in a brief sentence: “These cartesian objections are future relevant, but ultimately they don't matter much for AI safety because powerful AI systems - even those of human-level intelligence - will likely need to overcome these problems regardless.”

I think Jacob is suggesting that the AGI will autonomously develop a robust notion of self-empowerment, including “what it means for me (the AGI) to not get manipulated”, and then it can (somehow?) transfer that notion to humans.

If so, I’m skeptical. The main failure mode that I expect is “ruthless consequentialist AGI”, and this story really doesn’t apply there. If the AGI wants there to be paperclips, then it will instrumentally want to avoid getting ‘manipulated’, in the trivial sense that if it stops wanting paperclips then there will be fewer paperclips. This AGI would not face anything remotely analogous to the conundrum that humans don’t really know what they want for the long-term future, and are figuring it out, and when they say ‘manipulation is bad’ they are expressing some hard-to-pin-down preference about how this process of self-discovery plays out. Compare that to the AGI, which does not want self-discovery; it just wants paperclips. See also: §0.3 of my post “6 reasons why “alignment-is-hard” discourse seems alien to human intuitions, and vice-versa”.

(Update: See Jacob’s reply in the comments.)

3.3 “Vingean agency”?

Yet another example is @abramdemski’s “Vingean agency” (2022) (following earlier work by Yudkowsky). He starts from a place very close to the human intuitions in §2 above, i.e. “agency” is when you can predict the outcomes but not the actions leading to them. Then he hints at an intriguing idea that maybe we can just make that formal! I.e., maybe I was too quick to dismiss that kind of thing above using terms like “messed-up ontology”. (As Abram writes: “I also think it's possible that Vingean agency can be extended to be ‘the’ definition of agency, if we think that agency is just Vingean agency from some perspective….”)

By analogy, to borrow an example from @johnswentworth, thermodynamics concepts like “temperature” are tied to imperfect modeling ability (since an omniscient observer would instead track the velocity of every particle). So why can’t “agency” be tied to imperfect modeling ability too?

But alas, even if we can rigorously define Vingean agency, I don’t think it would really help with the problem I want it to solve here, i.e. pinning down a distinction between good “counsel” vs bad “manipulation”. Vingean agency seems to solve the problem of identifying an agent trying to do something, by noticing easier-to-predict ends happening by harder-to-predict means. But the “manipulation” concept worries about the possibility of intervention upstream of a person’s ego-syntonic desires. If the AI can brainwash me into deeply wanting to maximize paperclips, and then I execute a clever plan to maximize paperclips, then I would still be a Vingean agent, as long as my clever plan was sufficiently clever (from some perspective). So the brainwashing would strip me of my intuitive agency, but not my Vingean agency.
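As a toy illustration of that last point, here is a minimal sketch. It is not Abram's definition: the "entropy gap" score (entropy of action sequences minus entropy of outcomes), the number-line agent, and the choice of goals are all assumptions invented for this example.

```python
import math
import random
from collections import Counter

def entropy(samples):
    """Empirical Shannon entropy, in bits, of a sequence of hashable samples."""
    counts = Counter(samples)
    n = len(samples)
    return -sum(c / n * math.log2(c / n) for c in counts.values())

def run_agent(goal, rng, steps=6):
    """Hypothetical agent: reaches `goal` on a number line via a randomly ordered plan.

    Assumes `goal` has the same parity as `steps` and that abs(goal) <= steps.
    """
    ups = (steps + goal) // 2
    moves = [+1] * ups + [-1] * (steps - ups)
    rng.shuffle(moves)  # many different routes, always the same destination
    return tuple(moves), sum(moves)

def vingean_gap(goal, trials=2000, seed=0):
    """Stand-in score: how much harder the actions are to predict than the outcome."""
    rng = random.Random(seed)
    actions, outcomes = zip(*(run_agent(goal, rng) for _ in range(trials)))
    return entropy(actions) - entropy(outcomes)

own_goal = 2           # what the person originally wanted
brainwashed_goal = -2  # what the AI made them want instead
print(vingean_gap(own_goal), vingean_gap(brainwashed_goal))
```

Both scores come out large and essentially equal (close to the log2(15) bits of route uncertainty), because the score only looks at the relationship between means and ends. Nothing in it is sensitive to where the goal came from, which is the thing the “manipulation” concept cares about.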

3.4 The AI doesn’t care about (is not optimizing for) what the human winds up wanting?

Another potential approach would be to define optimization more broadly (e.g. “The Ground of Optimization”, @Alex Flint 2020), and ask whether there’s optimization in the AI towards what the human winds up deciding or wanting. The idea would be: we want the AI to provide us with relevant information, but to have no opinion either way about what we ultimately wind up wanting. We might wind up changing our desires as a result of the information, but (the story goes) it’s better that the information was not optimized to make us change our desires in a specific way.

This approach aligns pretty well with the human intuitions in §2.2 above, and more generally has a lot going for it! But alas, I currently think the most important use-case for AGI is figuring out true important things about the world (esp. related to ASI alignment and strategy) and explaining those things to the human. For this process to be effective, we cannot have an AGI that’s unconcerned with what we wind up believing after the discussion—that’s a recipe for slop-and-doom, or just an AI that’s incomprehensible and unhelpful. Rather, I want the AI to be like a disagreeable nerd that wants us to have a good understanding, notices areas where we’re confused, and is brainstorming and strategizing on how to help set us straight by improving its clarity and pedagogy. This strategizing is clearly a form of optimization, and the target of the optimization is related to the human’s eventual desires (well, it’s nominally about the human’s beliefs, but beliefs and desires are entangled), and I really think we need this kind of optimization to survive the transition to ASI.

In other words, I don’t think a brain-like AGI can successfully explain something novel and unintuitive to somebody, without caring whether the person winds up understanding it.

So this plan is out too.

3.5 Impact minimization?

Next idea: Perhaps we could rely on some notion of impact-minimization (1,2), on the grounds that changing a human’s goals has unusually large downstream impacts? For example, I would put “Instrumental Goals Are A Different And Friendlier Kind Of Thing Than Terminal Goals” (2025) by @johnswentworth and @David Lorell into this general category.

But alas, that can’t distinguish good counsel from bad manipulation, since both affect the human’s goals. As mentioned in §3.1 above, even telling a person straightforward true facts can change what they’re trying to do, in a high-impact way.

3.6 Attainable utility preservation?

“Attainable Utility Preservation” and related ideas seem to all be rooted in the messed-up ontology where agents are free to choose what to do, instead of their decisions themselves having upstream causes. So it doesn’t seem to help me here.

4. Even more ideas (that don’t really solve my problem)

That’s all I can think of that’s directly in the alignment literature, but let’s keep brainstorming!

4.1 Game theory and incentive design?

At least some of the social intuitions under discussion can be justified in a framework of game-theoretic equilibria. For example, our concept “culpability” overlaps with “a system of punishment which will set up incentives such that the end-result is overall good”. Alas, game theory tends to take for granted that people have terminal goals, and doesn’t seem to offer a useful framework for thinking about people changing each other’s terminal goals in good ways versus bad.

4.2 The person’s judgments of what kinds of interactions are good vs bad?

In §2.3, I mentioned that a big part of how we think about “counsel vs manipulation” is simply a gestalt feeling that some interaction is good vs bad.

That suggests an approach where AIs simply learn to make a human-like gestalt assessment of what’s good vs bad (according to humans in general, and according to this particular human specifically), and then do the good things but not the bad things. Not manipulating people would, we imagine, naturally come along for the ride.

If we do something like that, I wouldn’t think of it as a solution to the True Name thing, but rather giving up on the True Name thing in favor of a different approach entirely, one relying more directly on (real or simulated) human judgment. See e.g. “Act-based approval-directed agents”, for IDA skeptics.

This kind of approach will probably sound like the very obvious solution for readers who work on LLMs. No comment on LLMs, but for the problem I’m working on (brain-like AGI), it just brings me right back to where I started in §1.2: if we’re learning what’s good by the gestalt of human judgment and culture, and if human judgment and culture can themselves be gradually shifted over time, then this might not be an adequate bulwark against the AGI’s consequentialist desires. (And I do think we need the AGI to have some consequentialist desires.)

4.3 “It’s a messed-up ontology, but who cares?”

I care! The problem I see is: we should generically expect AGI (and even more, ASI) to eventually wind up with true beliefs, and with concepts that closely track the world as it really is. And its desires will be connected to those concepts seeming good or bad.

Basically, the better you’re able to model someone, the less coherent is the idea that they are expressing their agency, that they’re empowered, that you are or aren’t manipulating them, etc. Why? Because their decisions, and even their deepest, truest desires, really are downstream of their manipulable environment, situation, biology, etc.

By analogy, when you’re writing traditional UI code or balancing a pile of rocks, there isn’t really any notion of “letting the system self-actualize” or whatever. You can choose not to think about what the consequences of your coding or rock-balancing activities will be, but that’s different (see §3.4 above). And I suspect that increasingly-competent AGIs will increasingly see humans in a similar manner: they, including their “free will”, are just another real-world system that gets pushed around by circumstance, and which will predictably respond to interventions like anything else.

5. …But doesn’t this analysis equally “disprove” the possibility of human helpfulness?

And yet! Humans can be robustly helpful, right? Can we be inspired by that?

Well, one hopeful proposal would be to say: we humans are still generally using the “messed-up ontology” containing free will intuitions! Even while some of us intellectually acknowledge that the ontology is messed-up … we keep using it anyway! And gee, look at all the stuff we humans have gotten done, in terms of science, technology, governance, philosophy, etc. Maybe a “baby AGI” will develop free will intuitions for the same reasons we do, and could likewise get quite far within the messed-up ontology, without having any issues. Maybe it could get far enough to end the “acute risk period”.