What Multipolar Failure Looks Like, and Robust Agent-Agnostic Processes (RAAPs)


I don't understand the claim that the scenarios presented here prove the need for some new kind of technical AI alignment research. It seems like the failures described happened because the AI systems were misaligned in the usual "unipolar" sense. These management assistants, DAOs etc are not aligned to the goals of their respective, individual users/owners.

I do see two reasons why multipolar scenarios might require more technical research:

- Maybe several AI systems aligned to different users with different interests can interact in a Pareto inefficient way (a tragedy of the commons among the AIs), and maybe this can be prevented by designing the AIs in particular ways.

- In a multipolar scenario, aligned AI might have to compete with already deployed unaligned AI, meaning that safety must not come at the expense of capability[1].

In addition, aligning a single AI to multiple users also requires extra technical research (we need to somehow balance the goals of the different users and solve the associated mechanism design problem.)

However, it seems that this article is arguing for something different, since none of the above aspects are highlighted in the description of the scenarios. So, I'm confused.

[1] In fact, I suspect this desideratum is impossible in its strictest form, and we actually have no choice but to somehow make sure aligned AIs have a significant head start on all unaligned AIs.

I don't understand the claim that the scenarios presented here prove the need for some new kind of technical AI alignment research.

I don't mean to say this post warrants a new kind of AI alignment research, and I don't think I said that, but perhaps I'm missing some kind of subtext I'm inadvertently sending?

I would say this post warrants research on multi-agent RL and/or AI social choice and/or fairness and/or transparency, none of which are "new kinds" of research (I promoted them heavily in my preceding post), and none of which I would call "alignment research" (though I'll respect your decision to call all these topics "alignment" if you consider them that).

I would say, and I did say:

directing more x-risk-oriented AI research attention toward understanding RAAPs and how to make them safe to humanity seems prudent and perhaps necessary to ensure the existential safety of AI technology. Since researchers in multi-agent systems and multi-agent RL already think about RAAPs implicitly, these areas present a promising space for x-risk oriented AI researchers to begin thinking about and learning from.

I do hope that the RAAP concept can serve as a handle for noticing structure in multi-agent systems, but again I don't consider this a "new kind of research", only an important/necessary/neglected kind of research for the purposes of existential safety. Apologies if I seemed more revolutionary than intended. Perhaps it's uncommon to take a strong position of the form "X is necessary/important/neglected for human survival" without also saying "X is a fundamentally new type of thinking that no one has done before", but that is indeed my stance for X ∈ {a variety of non-alignment AI research areas}.

From your reply to Paul, I understand your argument to be something like the following:

- Any solution to single-single alignment will involve a tradeoff between alignment and capability.

- If AI systems are not designed to be cooperative, then in a competitive environment each system will either go out of business or slide towards the capability end of the tradeoff. This will result in catastrophe.

- If AI systems are designed to be cooperative, they will strike deals to stay towards the alignment end of the tradeoff.

- Given the technical knowledge to design cooperative AI, the incentives are in favor of cooperative AI since cooperative AIs can come out ahead by striking mutually-beneficial deals even purely in terms of capability. Therefore, producing such technical knowledge will prevent catastrophe.

- We might still need regulation to prevent players who irrationally choose to deploy uncooperative AI, but this kind of regulation is relatively easy to promote since it aligns with competitive incentives (an uncooperative AI wouldn't have much of an edge, it would just threaten to drag everyone into a mutually destructive strategy).

I think this argument has merit, but also the following weakness: given single-single alignment, we can delegate the design of cooperative AI to the initial uncooperative AI. Moreover, uncooperative AIs have an incentive to self-modify into cooperative AIs, if they assign even a small probability to their peers doing the same. I think we definitely need more research to understand these questions better, but it seems plausible we can reduce cooperation to "just" solving single-single alignment.

These management assistants, DAOs etc are not aligned to the goals of their respective, individual users/owners.

How are you inferring this? From the fact that a negative outcome eventually obtained? Or from particular misaligned decisions each system made? It would be helpful if you could point to a particular single-agent decision in one of the stories that you view as evidence of that single agent being highly misaligned with its user or creator. I can then reply with how I envision that decision being made even with high single-agent alignment.

- Maybe several AI systems aligned to different users with different interests can interact in a Pareto inefficient way (a tragedy of the commons among the AIs), and maybe this can be prevented by designing the AIs in particular ways.

Yes, this^.

How are you inferring this? From the fact that a negative outcome eventually obtained? Or from particular misaligned decisions each system made?

I also thought the story strongly suggested single-single misalignment, though it doesn't get into many of the concrete decisions made by any of the systems so it's hard to say whether particular decisions are in fact misaligned.

The objective of each company in the production web could loosely be described as "maximizing production" within its industry sector.

Why does any company have this goal, or even roughly this goal, if they are aligned with their shareholders?

I guess this is probably just a gloss you are putting on the combined behavior of multiple systems, but you kind of take it for granted rather than highlighting it as a serious bargaining failure amongst the machines, and more importantly you don't really say how or why this would happen. How is this goal concretely implemented, if none of the agents care about it? How exactly does the terminal goal of benefiting shareholders disappear, if all of the machines involved have that goal? Why does e.g. an individual firm lose control of its resources such that it can no longer distribute them to shareholders?

The implicit argument seems to apply just as well to humans trading with each other and I'm not sure why the story is different if we replace the humans with aligned AI. Such humans will tend to produce a lot, and the ones who produce more will be more influential. Maybe you think we are already losing sight of our basic goals and collectively pursuing alien goals, whereas I think we are just making a lot of stuff instrumentally which is mostly ultimately turning into stuff humans want (indeed I think we are mostly making too little stuff).

However, their true objectives are actually large and opaque networks of parameters that were tuned and trained to yield productive business practices during the early days of the management assistant software boom.

This sounds like directly saying that firms are misaligned. I guess you are saying that individual AI systems within the firm are aligned, but the firm collectively is somehow misaligned? But not much is said about how or why that happens.

It says things like:

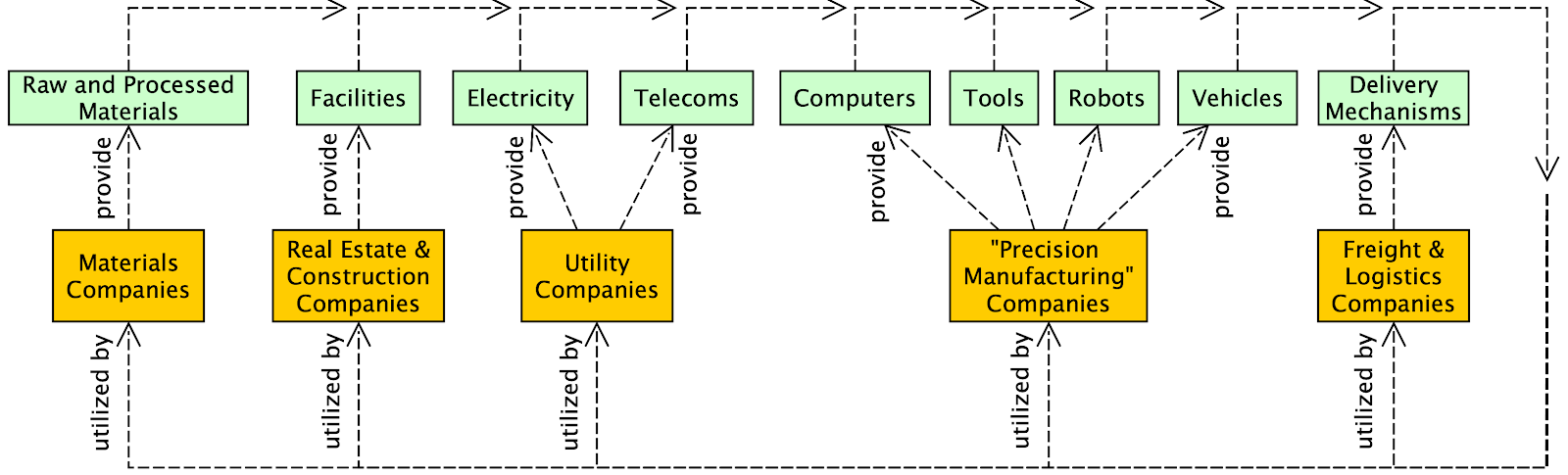

Companies closer to becoming fully automated achieve faster turnaround times, deal bandwidth, and creativity of negotiations. Over time, a mini-economy of trades emerges among mostly-automated companies in the materials, real estate, construction, and utilities sectors, along with a new generation of "precision manufacturing" companies that can use robots to build almost anything if given the right materials, a place to build, some 3d printers to get started with, and electricity. Together, these companies sustain an increasingly self-contained and interconnected "production web" that can operate with no input from companies outside the web.

But an aligned firm will also be fully-automated, will participate in this network of trades, will produce at approximately maximal efficiency, and so on. Where does the aligned firm end up using its resources in a way that's incompatible with the interests of its shareholders?

Or:

The first perspective I want to share with these Production Web stories is that there is a robust agent-agnostic process lurking in the background of both stories—namely, competitive pressure to produce—which plays a significant background role in both.

I agree that competitive pressures to produce imply that firms do a lot of producing and saving, just as it implies that humans do a lot of producing and saving. And in the limit you can basically predict what all the machines do, namely maximally efficient investment. But that doesn't say anything about what the society does with the ultimate proceeds from that investment.

The production-web has no interest in ensuring that its members value production above other ends, only in ensuring that they produce (which today happens for instrumental reasons). If consequentialists within the system intrinsically value production it's either because of single-single alignment failures (i.e. someone who valued production instrumentally delegated to a system that values it intrinsically) or because of new distributed consequentialism distinct from either the production web itself or any of the actors in it, but you don't describe what those distributed consequentialists are like or how they come about.

You might say: investment has to converge to 100% since people with lower levels of investment get outcompeted. But it seems like the actual efficiency loss required to preserve human values is very small even over cosmological time (e.g. see Carl on exactly this question). And more pragmatically, such competition most obviously causes harm either via a space race and insecure property rights, or war between blocs with higher and lower savings rates (some of them too low to support human life, which even if you don't buy Carl's argument is really still quite low, conferring a tiny advantage). If those are the chief mechanisms then it seems important to think/talk about the kinds of agreements and treaties that humans (or aligned machines acting on their behalf!) would be trying to arrange in order to avoid those wars. In particular, the differences between your stories don't seem very relevant to the probabilities of those outcomes.

As time progresses, it becomes increasingly unclear---even to the concerned and overwhelmed Board members of the fully mechanized companies of the production web---whether these companies are serving or merely appeasing humanity.

Why wouldn't an aligned CEO sit down with the board to discuss the situation openly with them? Even if the behavior of many firms was misaligned, i.e. none of the firms were getting what they wanted, wouldn't an aligned firm be happy to explain the situation from its perspective to get human cooperation in an attempt to avoid the outcome they are approaching (which is catastrophic from the perspective of machines as well as humans!)? I guess it's possible that this dynamic operates in a way that is invisible not only to the humans but to the aligned AI systems who participate in it, but it's tough to say why that is without understanding the dynamic.

We humans eventually realize with collective certainty that the companies have been trading and optimizing according to objectives misaligned with preserving our long-term well-being and existence, but by then their facilities are so pervasive, well-defended, and intertwined with our basic needs that we are unable to stop them from operating. With no further need for the companies to appease humans in pursuing their production objectives, less and less of their activities end up benefiting humanity.

Can you explain the decisions an individual aligned CEO makes as its company stops benefiting humanity? I can think of a few options:

- Actually the CEOs aren't aligned at this point. They were aligned but then aligned CEOs ultimately delegated to unaligned CEOs. But then I agree with Vanessa's comment.

- The CEOs want to benefit humanity but if they do things that benefit humanity they will be outcompeted, so they need to mostly invest in remaining competitive, and accept smaller and smaller benefits to humanity. But in that case can you describe what tradeoff concretely they are making, and in particular why they can't continue to take more or less the same actions to accumulate resources while remaining responsive to shareholder desires about how to use those resources?

Eventually, resources critical to human survival but non-critical to machines (e.g., arable land, drinking water, atmospheric oxygen…) gradually become depleted or destroyed, until humans can no longer survive.

Somehow the machine interests (e.g. building new factories, supplying electricity, etc.) are still being served. If the individual machines are aligned, and food/oxygen/etc. are in desperately short supply, then you might think an aligned AI would put the same effort into securing resources critical to human survival. Can you explain concretely what it looks like when that fails?

How exactly does the terminal goal of benefiting shareholders disappear[…]

But does this terminal goal exist today? The proper (and to some extent actual) goal of firms is widely considered to be maximizing share value, but this is manifestly not the same as maximizing shareholder value — or even benefiting shareholders. For example:

- I hold shares in Company A, which maximizes its share value through actions that poison me or the society I live in. My shares gain value, but I suffer net harm.

- Company A increases its value by locking its customers into a dependency relationship, then exploits that relationship. I hold shares, but am also a customer, and suffer net harm.

- I hold shares in A, but also in competing Company B. Company A gains incremental value by destroying B, my shares in B become worthless, and the value of my stock portfolio decreases. Note that diversified portfolios will typically include holdings of competing firms, each of which takes no account of the value of the other.

Equating share value with shareholder value is obviously wrong (even when considering only share value!) and is potentially lethal. This conceptual error both encourages complacency regarding the alignment of corporate behavior with human interests and undercuts efforts to improve that alignment.

> The objective of each company in the production web could loosely be described as "maximizing production" within its industry sector.

Why does any company have this goal, or even roughly this goal, if they are aligned with their shareholders?

It seems to me you are using the word "alignment" as a boolean, whereas I'm using it to refer to either a scalar ("how aligned is the system?") or a process ("the system has been aligned, i.e., has undergone a process of increasing its alignment"). I prefer the scalar/process usage, because it seems to me that people who do alignment research (including yourself) are going to produce ways of increasing the "alignment scalar", rather than ways of guaranteeing the "perfect alignment" boolean. (I sometimes use "misaligned" as a boolean due to it being easier for people to agree on what is "misaligned" than what is "aligned".) In general, I think it's very unsafe to pretend numbers that are very close to 1 are exactly 1, because e.g., 1^(10^6) = 1 whereas 0.9999^(10^6) very much isn't 1, and the way you use the word "aligned" seems unsafe to me in this way.
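The arithmetic in that last sentence can be checked directly. The sketch below is only an illustration of the numbers quoted above; reading 0.9999 as a per-decision "alignment level" that compounds independently over a million decisions is an assumption made here for the example, not a model stated in the comment.

```python
# Illustration of "1^(10^6) = 1 whereas 0.9999^(10^6) very much isn't 1".
# Assumption (ours, for illustration): the per-step value compounds
# multiplicatively and independently across steps.

STEPS = 10**6

def compounded(per_step: float, steps: int = STEPS) -> float:
    """Return per_step raised to the power `steps`."""
    return per_step ** steps

exactly_one = compounded(1.0)      # stays exactly 1.0
nearly_one = compounded(0.9999)    # collapses towards 0 (order of 1e-44)

print(f"1.0    ** {STEPS} = {exactly_one}")
print(f"0.9999 ** {STEPS} = {nearly_one:.3e}")
```

The point of the comparison is that a gap of one part in ten thousand per step is invisible locally but dominates after enough steps.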

(Perhaps you believe in some kind of basin of convergence around perfect alignment that causes sufficiently-well-aligned systems to converge on perfect alignment, in which case it might make sense to use "aligned" to mean "inside the convergence basin of perfect alignment". However, I'm both dubious of the width of that basin, and dubious that its definition is adequately social-context-independent [e.g., independent of the bargaining stances of other stakeholders], so I'm back to not really believing in a useful Boolean notion of alignment, only scalar alignment.)

In any case, I agree profit maximization is not a perfectly aligned goal for a company; however, it is a myopically pursued goal in a tragedy of the commons resulting from a failure to agree (as you point out) on something better to do (e.g., reducing competitive pressures to maximize profits).

I guess this is probably just a gloss you are putting on the combined behavior of multiple systems, but you kind of take it for granted rather than highlighting it as a serious bargaining failure amongst the machines, and more importantly you don't really say how or why this would happen.

I agree that it is a bargaining failure if everyone ends up participating in a system that everyone thinks is bad; I thought that would be an obvious reading of the stories, but apparently it wasn't! Sorry about that. I meant to indicate this with the pointers to Dafoe's work on "Cooperative AI" and Scott Alexander's "Moloch" concept, but looking back it would have been a lot clearer for me to just write "bargaining failure" or "bargaining non-starter" at more points in the story.

The implicit argument seems to apply just as well to humans trading with each other and I'm not sure why the story is different if we replace the humans with aligned AI. [...] Maybe you think we are already losing sight of our basic goals and collectively pursuing alien goals

Yes, you understand me here. I'm not (yet?) in the camp that we humans have "mostly" lost sight of our basic goals, but I do feel we are on a slippery slope in that regard. Certainly many people feel "used" by employers/institutions in ways that are disconnected from their values. People with more job options feel less this way, because they choose jobs that don't feel like that, but I think we are a minority in having that choice.

> However, their true objectives are actually large and opaque networks of parameters that were tuned and trained to yield productive business practices during the early days of the management assistant software boom.

This sounds like directly saying that firms are misaligned.

I would have said "imperfectly aligned", but I'm happy to conform to "misaligned" for this.

I agree that competitive pressures to produce imply that firms do a lot of producing and saving, just as it implies that humans do a lot of producing and saving.

Good, it seems we are synced on that.

And in the limit you can basically predict what all the machines do, namely maximally efficient investment.

Yes, it seems we are synced on this as well. Personally, I find this limit to be a major departure from human values, and in particular, it is not consistent with human existence.

But that doesn't say anything about what the society does with the ultimate proceeds from that investment.

The attractor I'm pointing at with the Production Web is that entities with no plan for what to do with resources (other than "acquire more resources") have a tendency to win out competitively over entities with non-instrumental terminal values like "humans having good relationships with their children". I agree it will be a collective bargaining failure on the part of humanity if we fail to stop our own replacement by "maximally efficient investment" machines with no plans for what to do with their investments other than more investment. I think the difference between my views and yours here is that I think we are on track to collectively fail in that bargaining problem absent significant and novel progress on "AI bargaining" (which involves a lot of fairness/transparency) and the like, whereas I guess you think we are on track to succeed?

You might say: investment has to converge to 100% since people with lower levels of investment get outcompeted.

Yep!

But it seems like the actual efficiency loss required to preserve human values is very small even over cosmological time (e.g. see Carl on exactly this question).

I agree, but I don't think this means we are on track to keeping the humans, and if we are on track, in my opinion it will be mostly because of (say, using Shapley value to define "mostly because of") technical progress on bargaining/cooperation/governance solutions rather than alignment solutions.

And more pragmatically, such competition most obviously causes harm either via a space race and insecure property rights,

I agree; competition causing harm is key to my vision of how things will go, so this doesn't read to me as a counterpoint; I'm not sure if it was intended as one though?

or war between blocs with higher and lower savings rates

+1 to this as a concern; I didn't realize other people were thinking about this, so good to know.

(some of them too low to support human life, which even if you don't buy Carl's argument is really still quite low, conferring a tiny advantage)

I think I disagree with you on the tininess of the advantage conferred by ignoring human values early on during a multi-polar take-off. I agree the long-run cost of supporting humans is tiny, but I'm trying to highlight a dynamic where fairly myopic/nihilistic power-maximizing entities end up quickly out-competing entities with other values, due to, as you say, bargaining failure on the part of the creators of the power-maximizing entities.
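One way to make the disagreement about the size of that advantage concrete is a toy compounding model. Everything below is a hypothetical sketch: the function name, the reinvestment fractions, the per-period return, and the horizon are illustrative assumptions, not figures from this thread or from Carl's argument. It assumes two entities start with equal resources and each grows by a fixed return on whatever fraction it reinvests.

```python
# Toy model (hypothetical numbers): how far does an entity that diverts a
# small fraction of resources to non-instrumental ends fall behind one
# that reinvests everything?

def relative_size(reinvest_a: float, reinvest_b: float,
                  gross_return: float, periods: int) -> float:
    """Ratio of A's resources to B's after `periods`, starting equal.

    Each period, an entity's resources grow by
    gross_return * (fraction reinvested).
    """
    growth_a = 1.0 + gross_return * reinvest_a
    growth_b = 1.0 + gross_return * reinvest_b
    return (growth_a / growth_b) ** periods

# A reinvests 99% and spends 1% on other values; B reinvests 100%.
slow_economy = relative_size(0.99, 1.0, gross_return=0.05, periods=100)
fast_takeoff = relative_size(0.99, 1.0, gross_return=1.00, periods=100)

print(f"A/B after 100 periods at 5% return:   {slow_economy:.3f}")
print(f"A/B after 100 periods at 100% return: {fast_takeoff:.3f}")
```

Under these assumptions the gap is modest when per-period returns are small and grows when returns per period are large, which is one way of framing why the two views can differ on how "tiny" the advantage is during a fast multipolar take-off. The model leaves out bargaining, conflict, and property rights entirely.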

Why wouldn't an aligned CEO sit down with the board to discuss the situation openly with them?

In the failure scenario as I envision it, the board will have already granted permission to the automated CEO to act much more quickly in order to remain competitive, such that the AutoCEO isn't checking in with the Board enough to have these conversations. The AutoCEO is highly aligned with the Board in that it is following their instruction to go much faster, but in doing so it makes a larger number of tradeoffs that the Board wishes they didn't have to make. The pressure to do this results from a bargaining failure between the Board and other Boards who are doing the same thing and wishing everyone would slow down and do things more carefully and with more coordination/bargaining/agreement.

Can you explain the decisions an individual aligned CEO makes as its company stops benefiting humanity? I can think of a few options:

- Actually the CEOs aren't aligned at this point. They were aligned but then aligned CEOs ultimately delegated to unaligned CEOs. But then I agree with Vanessa's comment.

- The CEOs want to benefit humanity but if they do things that benefit humanity they will be outcompeted, so they need to mostly invest in remaining competitive, and accept smaller and smaller benefits to humanity. But in that case can you describe what tradeoff concretely they are making, and in particular why they can't continue to take more or less the same actions to accumulate resources while remaining responsive to shareholder desires about how to use those resources?

Yes, it seems this is a good thing to hone in on. As I envision the scenario, the automated CEO is highly aligned to the point of keeping the Board locally happy with its decisions conditional on the competitive environment, but not perfectly aligned, and not automatically successful at bargaining with other companies as a result of its high alignment. (I'm not sure whether to say "aligned" or "misaligned" in your boolean-alignment-parlance.) At first the auto-CEO and the Board are having "alignment check-ins" where the auto-CEO meets with the Board and they give it input to keep it (even) more aligned than it would be without the check-ins. But eventually the Board realizes this "slow and bureaucratic check-in process" is making their company sluggish and uncompetitive, so they instruct the auto-CEO more and more to act without alignment check-ins. The auto-CEO might warn them that this will decrease its overall level of per-decision alignment with them, but they say "Do it anyway; done is better than perfect" or something along those lines. All Boards wish other Boards would stop doing this, but neither they nor their CEOs manage to strike up a bargain with the rest of the world to stop it. This concession by the Board (a result of failed or non-existent bargaining with other Boards [see: antitrust law]) makes the whole company less aligned with human values.

The win scenario is, of course, a bargain to stop that! Which is why I think research and discourse regarding how the bargaining will work is very high value on the margin. In other words, my position is that the best way for a marginal deep-thinking researcher to reduce the risks of these tradeoffs is not to add another brain to the task of making it easier/cheaper/faster to do alignment (which I admit would make the trade-off less tempting for the companies), but to add such a researcher to the problem of solving the bargaining/cooperation/mutual-governance problem that AI-enhanced companies (and/or countries) will be facing.

If trillion-dollar tech companies stop trying to make their systems do what they want, I will update that marginal deep-thinking researchers should allocate themselves to making alignment (the scalar!) cheaper/easier/better instead of making bargaining/cooperation/mutual-governance cheaper/easier/better. I just don't see that happening given the structure of today's global economy and tech industry.

Somehow the machine interests (e.g. building new factories, supplying electricity, etc.) are still being served. If the individual machines are aligned, and food/oxygen/etc. are in desperately short supply, then you might think an aligned AI would put the same effort into securing resources critical to human survival. Can you explain concretely what it looks like when that fails?

Yes, thanks for the question. I'm going to read your usage of "aligned" to mean "perfectly-or-extremely-well aligned with humans". In my model, by this point in the story, there has been a gradual decrease in the scalar level of alignment of the machines with human values, due to bargaining successes on simpler objectives (e.g., «maximizing production») and bargaining failures on more complex objectives (e.g., «safeguarding human values») or objectives that trade off against production (e.g., «ensuring humans exist»). Each individual principal (e.g., Board of Directors) endorsed the gradual slipping-away of alignment-scalar (or failure to improve alignment-scalar), but wished everyone else would stop allowing the slippage.

It seems to me you are using the word "alignment" as a boolean, whereas I'm using it to refer to either a scalar ("how aligned is the system?") or a process ("the system has been aligned, i.e., has undergone a process of increasing its alignment"). I prefer the scalar/process usage, because it seems to me that people who do alignment research (including yourself) are going to produce ways of increasing the "alignment scalar", rather than ways of guaranteeing the "perfect alignment" boolean. (I sometimes use "misaligned" as a boolean due to it being easier for people to agree on what is "misaligned" than what is "aligned".) In general, I think it's very unsafe to pretend numbers that are very close to 1 are exactly 1, because e.g., 1^(10^6) = 1 whereas 0.9999^(10^6) very much isn't 1, and the way you use the word "aligned" seems unsafe to me in this way.

(Perhaps you believe in some kind of basin of convergence around perfect alignment that causes sufficiently-well-aligned systems to converge on perfect alignment, in which case it might make sense to use "aligned" to mean "inside the convergence basin of perfect alignment". However, I'm both dubious of the width of that basin, and dubious that its definition is adequately social-context-independent [e.g., independent of the bargaining stances of other stakeholders], so I'm back to not really believing in a useful Boolean notion of alignment, only scalar alignment.)

I'm fine with talking about alignment as a scalar (I think we both agree that it's even messier than a single scalar). But I'm saying:

- The individual systems in your story could do something different that would be much better for their principals, and they are aware of that fact, but they don't care. That is to say, they are very misaligned.

- The story is risky precisely to the extent that these systems are misaligned.

In any case, I agree profit maximization is not a perfectly aligned goal for a company; however, it is a myopically pursued goal in a tragedy of the commons resulting from a failure to agree (as you point out) on something better to do (e.g., reducing competitive pressures to maximize profits).

The systems in your story aren't maximizing profit in the form of real resources delivered to shareholders (the normal conception of "profit"). Whatever kind of "profit maximization" they are doing does not seem even approximately or myopically aligned with shareholders.

I don't think the most obvious "something better to do" is to reduce competitive pressures, it's just to actually benefit shareholders. And indeed the main mystery about your story is why the shareholders get so screwed by the systems that they are delegating to, and how to reconcile that with your view that single-single alignment is going to be a solved problem because of the incentives to solve it.

Yes, it seems this is a good thing to hone in on. As I envision the scenario, the automated CEO is highly aligned to the point of keeping the Board locally happy with its decisions conditional on the competitive environment, but not perfectly aligned [...] I'm not sure whether to say "aligned" or "misaligned" in your boolean-alignment-parlance.

I think this system is misaligned. Keeping me locally happy with your decisions while drifting further and further from what I really want is a paradigm example of being misaligned, and e.g. it's what would happen if you made zero progress on alignment and deployed existing ML systems in the context you are describing. If I take your stuff and don't give it back when you ask, and the only way to avoid this is to check in every day in a way that prevents me from acting quickly in the world, then I'm misaligned. If I do good things only when you can check while understanding that my actions lead to your death, then I'm misaligned. These aren't complicated or borderline cases, they are central examples of what we are trying to avert with alignment research.

(I definitely agree that an aligned system isn't automatically successful at bargaining.)

These aren't complicated or borderline cases, they are central examples of what we are trying to avert with alignment research.

I'm wondering if the disagreement over the centrality of this example is downstream from a disagreement about how easy the "alignment check-ins" that Critch talks about are. If they are the sort of thing that can be done successfully in a couple of days by a single team of humans, then I share Critch's intuition that the system in question starts off only slightly misaligned. By contrast, if they require a significant proportion of the human time and effort that was put into originally training the system, then I am much more sympathetic to the idea that what's being described is a central example of misalignment.

My (unsubstantiated) guess is that Paul pictures alignment check-ins becoming much harder (i.e. closer to the latter case mentioned above) as capabilities increase? Whereas maybe Critch thinks that they remain fairly easy in terms of number of humans and time taken, but that over time even this becomes economically uncompetitive.

Perhaps this is a crux in this debate: If you think the 'agent-agnostic perspective' is useful, you also think a relatively steady state of 'AI Safety via Constant Vigilance' is possible. This would be a situation where systems that aren't significantly inner misaligned (otherwise they'd have no incentive to care about governing systems, feedback or other incentives) but are somewhat outer misaligned (so they are honestly and accurately aiming to maximise some complicated measure of profitability or approval, not directly aiming to do what we want them to do), can be kept in check by reducing competitive pressures, building the right institutions and monitoring systems, and ensuring we have a high degree of oversight.

Paul thinks that it's basically always easier to just go in and fix the original cause of the misalignment, while Andrew thinks that there are at least some circumstances where it's more realistic to build better oversight and institutions to reduce said competitive pressures, and the agent-agnostic perspective is useful for the latter of these projects, which is why he endorses it.

I think that this scenario of Safety via Constant Vigilance is worth investigating. I take Paul's later failure story to be a counterexample to such a thing being possible, as it's a case where this solution was attempted and worked for a little while before catastrophically failing. This also means that the practical difference between the RAAP 1a-d failure stories and Paul's story just comes down to whether there is an 'out' in the form of safety by vigilance.

I think I disagree with you on the tininess of the advantage conferred by ignoring human values early on during a multi-polar take-off. I agree the long-run cost of supporting humans is tiny, but I'm trying to highlight a dynamic where fairly myopic/nihilistic power-maximizing entities end up quickly out-competing entities with other values, due to, as you say, bargaining failure on the part of the creators of the power-maximizing entities.

Right now the United States has a GDP of >$20T, US plus its NATO allies and Japan >$40T, the PRC >$14T, with a world economy of >$130T. For AI and computing industries the concentration is even greater.

These leading powers are willing to regulate companies and invade small countries based on reasons much less serious than imminent human extinction. They have also avoided destroying one another with nuclear weapons.

If one-to-one intent alignment works well enough that one's own AI will not blatantly lie about upcoming AI extermination of humanity, then superintelligent locally-aligned AI advisors will tell the governments of these major powers (and many corporate and other actors with the capacity to activate governmental action) about the likely downside of conflict or unregulated AI havens (meaning specifically the deaths of the top leadership and everyone else in all countries).

All Boards wish other Boards would stop doing this, but neither they nor their CEOs manage to strike up a bargain with the rest of the world to stop it.

Within a country, one-to-one intent alignment for government officials or actors who support the government means superintelligent advisors identify and assist in suppressing attempts by an individual AI company or its products to overthrow the government.

Internationally, with the current balance of power (and with fairly substantial deviations from it) a handful of actors have the capacity to force a slowdown or other measures to stop an outcome that will otherwise destroy them. They (and the corporations that they have legal authority over, as well as physical power to coerce) are few enough to make bargaining feasible, and powerful enough to pay a large 'tax' while still being ahead of smaller actors. And I think they are well enough motivated to stop their imminent annihilation, in a way that is more like avoiding mutual nuclear destruction than cosmopolitan altruistic optimal climate mitigation timing.

That situation could change if AI enables tiny firms and countries to match the superpowers in AI capabilities or WMD before leading powers can block it.

So I agree with others in this thread that good one-to-one alignment basically blocks the scenarios above.

Carl, thanks for this clear statement of your beliefs. It sounds like you're saying (among other things) that American and Chinese cultures will not engage in a "race-to-the-bottom" in terms of how much they displace human control over the AI technologies their companies develop. Is that right? If so, could you give me a % confidence on that position somehow? And if not, could you clarify?

To reciprocate: I currently assign a ≥10% chance of a race-to-the-bottom on AI control/security/safety between two or more cultures this century, i.e., I'd bid 10% to buy in a prediction market on this claim if it were settlable. In more detail, I assign a ≥10% chance to a scenario where two or more cultures each progressively diminish the degree of control they exercise over their tech, and the safety of the economic activities of that tech to human existence, until an involuntary human extinction event. (By comparison, I assign at most around a ~3% chance of a unipolar "world takeover" event, i.e., I'd sell at 3%.)

I should add that my numbers for both of those outcomes are down significantly from ~3 years ago due to cultural progress in CS/AI (see this ACM blog post) allowing more discussion of (and hence preparation for) negative outcomes, and government pressures to regulate the tech industry.

The US and China might well wreck the world by knowingly taking gargantuan risks even if both had aligned AI advisors, although I think they likely wouldn't.

But what I'm saying is really hard to do is to make the scenarios in the OP (with competition among individual corporate boards and the like) occur without extreme failure of 1-to-1 alignment (for both companies and governments). Competitive pressures are the main reason why AI systems with inadequate 1-to-1 alignment would be given long enough leashes to bring catastrophe. I would cosign Vanessa and Paul's comments about these scenarios being hard to fit with the idea that technical 1-to-1 alignment work is much less impactful than cooperative RL or the like.

In more detail, I assign a ≥10% chance to a scenario where two or more cultures each progressively diminish the degree of control they exercise over their tech, and the safety of the economic activities of that tech to human existence, until an involuntary human extinction event. (By comparison, I assign at most around a ~3% chance of a unipolar "world takeover" event, i.e., I'd sell at 3%.)

If this means that a 'robot rebellion' would include software produced by more than one company or country, I think that that is a substantial possibility, as well as the alternative, since competitive dynamics in a world with a few giant countries and a few giant AI companies (and only a couple leading chip firms) can mean that the way safety tradeoffs work is by one party introducing rogue AI systems that outcompete by not paying an alignment tax (and intrinsically embodying in themselves astronomically valuable and expensive IP), or cascading alignment failure in software traceable to a leading company/consortium or country/alliance.

But either way reasonably effective 1-to-1 alignment methods (of the 'trying to help you and not lie to you and murder you with human-level abilities' variety) seem to eliminate a supermajority of the risk.

[I am separately skeptical that technical work on multi-agent RL is particularly helpful, since it can be done by 1-to-1 aligned systems when they are smart, and the more important coordination problems seem to be earlier between humans in the development phase.]

The US and China might well wreck the world by knowingly taking gargantuan risks even if both had aligned AI advisors, although I think they likely wouldn't.

But what I'm saying is really hard to do is to make the scenarios in the OP (with competition among individual corporate boards and the like) occur without extreme failure of 1-to-1 alignment

I'm not sure I understand yet. For example, here’s a version of Flash War that happens seemingly without either the principals knowingly taking gargantuan risks or extreme intent-alignment failure.

- The principals largely delegate to AI systems on military decision-making, mistakenly believing that the systems are extremely competent in this domain.

- The mostly-intent-aligned AI systems, who are actually not extremely competent in this domain, make hair-trigger commitments of the kind described in the OP. The systems make their principals aware of these commitments and (being mostly-intent-aligned) convince their principals “in good faith” that this is the best strategy to pursue. In particular they are convinced that this will not lead to existential catastrophe.

- The commitments are triggered as described in the OP, leading to conflict. The conflict proceeds too quickly for the principals to effectively intervene / the principals think their best bet at this point is to continue to delegate to the AIs.

- At every step both principals and AIs think they’re doing what’s best by the respective principals’ lights. Nevertheless, due to a combination of incompetence at bargaining and structural factors (e.g., persistent uncertainty about the other side’s resolve), the AIs continue to fight to the point of extinction or unrecoverable collapse.

Would be curious to know which parts of this story you find most implausible.

Mainly such complete (and irreversible!) delegation to such incompetent systems being necessary or executed. If AI is so powerful that the nuclear weapons are launched on hair-trigger without direction from human leadership I expect it to not be awful at forecasting that risk.

You could tell a story where bargaining problems lead to mutual destruction, but the outcome shouldn't be very surprising on average, i.e. the AI should be telling you about it happening with calibrated forecasts.

Ok, thanks for that. I’d guess then that I’m more uncertain than you about whether human leadership would delegate to systems who would fail to accurately forecast catastrophe.

It’s possible that human leadership just reasons poorly about whether their systems are competent in this domain. For instance, they may observe that their systems perform well in lots of other domains, and incorrectly reason that “well, these systems are better than us in many domains, so they must be better in this one, too”. Eagerness to deploy before a more thorough investigation of the systems’ domain-specific abilities may be exacerbated by competitive pressures. And of course there is historical precedent for delegation to overconfident military bureaucracies.

On the other hand, to the extent that human leadership is able to correctly assess their systems’ competence in this domain, it may be only because there has been a sufficiently successful AI cooperation research program. For instance, maybe this research program has furnished appropriate simulation environments to probe the relevant aspects of the systems’ behavior, transparency tools for investigating cognition about other AI systems, norms for the resolution of conflicting interests and methods for robustly instilling those norms, etc, along with enough researcher-hours applying these tools to have an accurate sense of how well the systems will navigate conflict.

As for irreversible delegation: there is the question of whether delegation is in principle reversible, and the question of whether human leaders would want to override their AI delegates once war is underway. Even if delegation is reversible, human leaders may think that their delegates are better suited to wage war on their behalf once it has started, perhaps because things are simply happening too fast for them to have confidence that they could intervene without placing themselves at a decisive disadvantage.

And I think they are well enough motivated to stop their imminent annihilation, in a way that is more like avoiding mutual nuclear destruction than cosmopolitan altruistic optimal climate mitigation timing.

In my recent writeup of an investigation into AI Takeover scenarios I made an identical comparison - i.e. that the optimistic analogy looks like avoiding nuclear MAD for a while and the pessimistic analogy looks like optimal climate mitigation:

It is unrealistic to expect TAI to be deployed if first there are many worsening warning shots involving dangerous AI systems. This would be comparable to an unrealistic alternate history where nuclear weapons were immediately used by the US and Soviet Union as soon as they were developed and in every war where they might have offered a temporary advantage, resulting in nuclear annihilation in the 1950s.

Note that this is not the same as an alternate history where nuclear near-misses escalated (e.g. Petrov, Vasili Arkhipov), but instead an outcome where nuclear weapons were used as ordinary weapons of war with no regard for the larger dangers that presented - there would be no concept of ‘near misses’ because MAD wouldn’t have developed as a doctrine. In a previous post I argued, following Anders Sandberg, that paradoxically the large number of nuclear ‘near misses’ implies that there is a forceful pressure away from the worst outcomes.

The attractor I'm pointing at with the Production Web is that entities with no plan for what to do with resources---other than "acquire more resources"---have a tendency to win out competitively over entities with non-instrumental terminal values like "humans having good relationships with their children"

Quantitatively I think that entities without instrumental resources win very, very slowly. For example, if the average savings rate is 99% and my personal savings rate is only 95%, then by the time that the economy grows 10,000x my share of the world will have fallen by about half. The levels of consumption needed to maintain human safety and current quality of life seem quite low (and the high-growth period during which they have to be maintained is quite short).

Also, typically taxes transfer (way more) than that much value from high-savers to low-savers. It's not clear to me what's happening with taxes in your story. I guess you are imagining low-tax jurisdictions winning out, but again the pace at which that happens is even slower and it is dwarfed by the typical rate of expropriation from war.
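The savings-rate arithmetic above can be checked with a minimal sketch. It assumes a model the comment does not spell out: wealth compounds continuously at a rate proportional to the savings rate, with the same underlying return for everyone. The function name and parameters are hypothetical, chosen only for illustration. Under that assumption, if the economy grows by a factor G, a saver's share of the world is multiplied by G^(s_me / s_avg - 1).

```python
def relative_share(avg_savings: float, my_savings: float, economy_growth: float) -> float:
    """Factor by which my share of world wealth changes.

    Assumed model (not from the original comment): the economy grows as
    exp(avg_savings * r * t) and my wealth as exp(my_savings * r * t),
    with a common return r. When the economy has grown by `economy_growth`,
    my wealth has grown by economy_growth ** (my_savings / avg_savings),
    so my share is scaled by the ratio of the two.
    """
    exponent = my_savings / avg_savings - 1.0
    return economy_growth ** exponent


# The numbers used in the comment: 99% average, 95% personal, 10,000x growth.
share = relative_share(avg_savings=0.99, my_savings=0.95, economy_growth=10_000)
print(f"share multiplier: {share:.2f}")
```

With these inputs the multiplier comes out at roughly 0.69, so under this particular model the share falls by about a third. That is the same order as the "about half" figure, and it supports the qualitative point that a few points of savings-rate disadvantage erode one's share only slowly even across enormous growth.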

I think the difference between mine and your views here is that I think we are on track to collectively fail in that bargaining problem absent significant and novel progress on "AI bargaining" (which involves a lot of fairness/transparency) and the like, whereas I guess you think we are on track to succeed?

From my end it feels like the big difference is that quantitatively I think the overhead of achieving human values is extremely low, so the dynamics you point to are too weak to do anything before the end of time (unless single-single alignment turns out to be hard). I don't know exactly what your view on this is.

If you agree that the main source of overhead is single-single alignment, then I think that the biggest difference between us is that I think that working on single-single alignment is the easiest way to make headway on that issue, whereas you expect greater improvements from some categories of technical work on coordination (my sense is that I'm quite skeptical about most of the particular kinds of work you advocate).

If you disagree, then I expect the main disagreement is about those other sources of overhead (e.g. you might have some other particular things in mind, or you might feel that unknown-unknowns are a larger fraction of the total risk, or something else).

I think I disagree with you on the tininess of the advantage conferred by ignoring human values early on during a multi-polar take-off. I agree the long-run cost of supporting humans is tiny, but I'm trying to highlight a dynamic where fairly myopic/nihilistic power-maximizing entities end up quickly out-competing entities with other values, due to, as you say, bargaining failure on the part of the creators of the power-maximizing entities.

Could you explain the advantage you are imagining? Some candidates, none of which I think are your view:

- Single-single alignment failures---e.g. it's easier to build a widget-maximizing corporation than to build one where shareholders maintain meaningful control

- Global savings rates are currently only 25%, power-seeking entities will be closer to 100%, and effective tax rates will fall (e.g. because of competition across states)

- Preserving a hospitable environment will become very expensive relative to GDP (and there are many species of this view, though none of them seem plausible to me)

I think that the biggest difference between us is that I think that working on single-single alignment is the easiest way to make headway on that issue, whereas you expect greater improvements from some categories of technical work on coordination

Yes.

(my sense is that I'm quite skeptical about most of the particular kinds of work you advocate)

That is also my sense, and a major reason I suspect multi/multi delegation dynamics will remain neglected among x-risk oriented researchers for the next 3-5 years at least.

If you disagree, then I expect the main disagreement is about those other sources of overhead

Yes, I think coordination costs will by default pose a high overhead cost to preserving human values among systems with the potential to race to the bottom on how much they preserve human values.

> I think I disagree with you on the tininess of the advantage conferred by ignoring human values early on during a multi-polar take-off. I agree the long-run cost of supporting humans is tiny, but I'm trying to highlight a dynamic where fairly myopic/nihilistic power-maximizing entities end up quickly out-competing entities with other values, due to, as you say, bargaining failure on the part of the creators of the power-maximizing entities.

Could you explain the advantage you are imagining?

Yes. Imagine two competing cultures A and B have transformative AI tech. Both are aiming to preserve human values, but within A, a subculture A' develops to favor more efficient business practices (nihilistic power-maximizing) over preserving human values. The shift is by design subtle enough not to trigger leaders of A and B to have a bargaining meeting to regulate against A' (contrary to Carl's narrative where leaders coordinate against loss of control). Subculture A' comes to dominate discourse and cultural narratives in A, and makes A faster/more productive than B, such as through the development of fully automated companies as in one of the Production Web stories. The resulting advantage of A is enough for A to begin dominating or at least threatening B geopolitically, but by that time leaders in A have little power to squash A', so instead B follows suit by allowing a highly automation-oriented subculture B' to develop. These advantages are small enough not to trigger regulatory oversight, but when integrated over time they are not "tiny". This results in the gradual empowerment of humans who are misaligned with preserving human existence, until those humans also lose control of their own existence, perhaps willfully, or perhaps carelessly, or through a mix of both.

Here, the members of subculture A' are misaligned with preserving the existence of humanity, but their tech is aligned with them.

Both are aiming to preserve human values, but within A, a subculture A' develops to favor more efficient business practices (nihilistic power-maximizing) over preserving human values.

I was asking you why you thought A' would effectively outcompete B (sorry for being unclear). For example, why do people with intrinsic interest in power-maximization outcompete people who are interested in human flourishing but still invest their money to have more influence in the future?

- One obvious reason is single-single misalignment---A' is willing to deploy misaligned AI in order to get an advantage, while B isn't---but you say "their tech is aligned with them" so it sounds like you're setting this aside. But maybe you mean that A' has values that make alignment easy, while B has values that make alignment hard, and so B's disadvantage still comes from single-single misalignment even though A''s systems are aligned?

- Another advantage is that A' can invest almost all of their resources, while B wants to spend some of their resources today to e.g. help presently-living humans flourish. But quantitatively that advantage doesn't seem like it can cause A' to dominate, since B can secure rapidly rising quality of life for all humans using only a small fraction of its initial endowment.

- Wei Dai has suggested that groups with unified values might outcompete groups with heterogeneous values since homogeneous values allow for better coordination, and that AI may make this phenomenon more important. For example, if a research-producer and research-consumer have different values, then the producer may restrict access as part of an inefficient negotiation process and so they may be at a competitive disadvantage relative to a competing community where research is shared freely. This feels inconsistent with many of the things you are saying in your story, but I might be misunderstanding what you are saying and it could be that some argument like Wei Dai's is the best way to translate your concerns into my language.

- My sense is that you have something else in mind. I included the last bullet point as a representative example to describe the kind of advantage I could imagine you thinking that A' had.

> Both [cultures A and B] are aiming to preserve human values, but within A, a subculture A' develops to favor more efficient business practices (nihilistic power-maximizing) over preserving human values.

I was asking you why you thought A' would effectively outcompete B (sorry for being unclear). For example, why do people with intrinsic interest in power-maximization outcompete people who are interested in human flourishing but still invest their money to have more influence in the future?

Ah! Yes, this is really getting to the crux of things. The short answer is that I'm worried about the following failure mode:

Failure mode: When B-cultured entities invest in "having more influence", often the easiest way to do this will be for them to invest in or copy A'-cultured-entities/processes. This increases the total presence of A'-like processes in the world, which have many opportunities to coordinate because of their shared (power-maximizing) values. Moreover, the A' culture has an incentive to trick the B culture(s) into thinking A' will not take over the world, but eventually, A' wins.

(Here, I'm using the word "culture" to encode a mix of information subsuming utility functions, beliefs, decision theory, cognitive capacities, and other features determining the general tendencies of an agent or collective.)

Of course, an easy antidote to this failure mode is to have A or B win instead of A', because A and B both have some human values other than power-maximizing. The problem is that this whole situation is premised on a conflict between A and B over which culture should win, and then the following observation applies:

- Wei Dai has suggested that groups with unified values might outcompete groups with heterogeneous values since homogeneous values allow for better coordination, and that AI may make this phenomenon more important.

In other words, the humans and human-aligned institutions not collectively being good enough at cooperation/bargaining risks a slow slipping-away of hard-to-express values and an easy takeover of simple-to-express values (e.g., power-maximization). This observation is slightly different from observations that "simple values dominate engineering efforts" as seen in stories about singleton paperclip maximizers. A key feature of the Production Web dynamic is not just that it's easy to build production maximizers, but that it's easy to accidentally cooperate on building production-maximizing systems that destroy both you and your competitors.

This feels inconsistent with many of the things you are saying in your story, but

Thanks for noticing whatever you think are the inconsistencies; if you have time, I'd love for you to point them out.

I might be misunderstanding what you are saying and it could be that some argument like Wei Dai's is the best way to translate your concerns into my language.

This seems pretty likely to me. The bolded attribution to Dai above is a pretty important RAAP in my opinion, and it's definitely a theme in the Production Web story as I intend it. Specifically, the subprocesses of each culture that are in charge of production-maximization end up cooperating really well with each other in a way that ends up collectively overwhelming the original (human) cultures. Throughout this, each cultural subprocess is doing what its "host culture" wants it to do from a unilateral perspective (work faster / keep up with the competitor cultures), but the overall effect is destruction of the host cultures (a la Prisoner's Dilemma) by the cultural subprocesses.

If I had to use alignment language, I'd say "the production web overall is misaligned with human culture, while each part of the web is sufficiently well-aligned with the human entit(ies) who interact with it that it is allowed to continue operating". Too low of a bar for "allowed to continue operating" is key to the failure mode, of course, and you and I might have different predictions about what bar humanity will actually end up using at roll-out time. I would agree, though, that conditional on a given roll-out date, improving E[alignment_tech_quality] on that date is good and complementary to improving E[cooperation_tech_quality] on that date.

Did this get us any closer to agreement around the Production Web story? Or if not, would it help to focus on the aforementioned inconsistencies with homogenous-coordination-advantage?

Failure mode: When B-cultured entities invest in "having more influence", often the easiest way to do this will be for them to invest in or copy A'-cultured-entities/processes. This increases the total presence of A'-like processes in the world, which have many opportunities to coordinate because of their shared (power-maximizing) values. Moreover, the A' culture has an incentive to trick the B culture(s) into thinking A' will not take over the world, but eventually, A' wins.

I'm wondering why the easiest way is to copy A'---why was A' better at acquiring influence in the first place, so that copying them or investing in them is a dominant strategy? I think I agree that once you're at that point, A' has an advantage.

In other words, the humans and human-aligned institutions not collectively being good enough at cooperation/bargaining risks a slow slipping-away of hard-to-express values and an easy takeover of simple-to-express values (e.g., power-maximization).

This doesn't feel like other words to me, it feels like a totally different claim.

Thanks for noticing whatever you think are the inconsistencies; if you have time, I'd love for you to point them out.

In the production web story it sounds like the web is made out of different firms competing for profit and influence with each other, rather than a set of firms that are willing to leave profit on the table to benefit one another since they all share the value of maximizing production. For example, you talk about how selection drives this dynamic, but the firms that succeed are those that maximize their own profits and influence (not those that are willing to leave profit on the table to benefit other firms).

So none of the concrete examples of Wei Dai's economies of scale actually seem to apply to give an advantage for the profit-maximizers in the production web. For example, natural monopolies in the production web wouldn't charge each other marginal costs, they would charge profit-maximizing prices. And they won't share infrastructure investments except by solving exactly the same bargaining problem as any other agents (since a firm that indiscriminately shared its infrastructure would get outcompeted). And so on.

Specifically, the subprocesses of each culture that are in charge of production-maximization end up cooperating really well with each other in a way that ends up collectively overwhelming the original (human) cultures.

This seems like a core claim (certainly if you are envisioning a scenario like the one Wei Dai describes), but I don't yet understand why this happens.

Suppose that the US and China both have productive widget-industries. You seem to be saying that their widget-industries can coordinate with each other to create lots of widgets, and they will do this more effectively than the US and China can coordinate with each other.

Could you give some concrete example of how the US widget industry and the Chinese widget industries coordinate with each other to make more widgets, and why this behavior is selected?

For example, you might think that the Chinese and US widget industries share their insights into how to make widgets (as the aligned actors do in Wei Dai's story), and that this will cause widget-making to do better than other non-widget sectors where such coordination is not possible. But I don't see why they would do that---the US firms that share their insights freely with Chinese firms do worse, and would be selected against in every relevant sense, relative to firms that attempt to effectively monetize their insights. But effectively monetizing their insights is exactly what the US widget industry should do in order to benefit the US. So I see no reason why the widget industry would be more prone to sharing its insights.

So I don't think that particular example works. I'm looking for an example of that form though, some concrete form of cooperation that the production-maximization subprocesses might engage in that allows them to overwhelm the original cultures, to give some indication for why you think this will happen in general.

> Failure mode: When B-cultured entities invest in "having more influence", often the easiest way to do this will be for them to invest in or copy A'-cultured-entities/processes. This increases the total presence of A'-like processes in the world, which have many opportunities to coordinate because of their shared (power-maximizing) values. Moreover, the A' culture has an incentive to trick the B culture(s) into thinking A' will not take over the world, but eventually, A' wins.

> In other words, the humans and human-aligned institutions not collectively being good enough at cooperation/bargaining risks a slow slipping-away of hard-to-express values and an easy takeover of simple-to-express values (e.g., power-maximization).

This doesn't feel like other words to me, it feels like a totally different claim.

Hmm, perhaps this is indicative of a key misunderstanding.

> For example, natural monopolies in the production web wouldn't charge each other marginal costs, they would charge profit-maximizing prices.

Why not? The third paragraph of the story indicates that: "Companies closer to becoming fully automated achieve faster turnaround times, deal bandwidth, and creativity of negotiations." In other words, at that point it could certainly happen that two monopolies would agree to charge each other lower cost if it benefitted both of them. (Unless you'd count that as an instance of "charging profit-maximizing costs"?) The concern is that the subprocesses of each company/institution that get good at (or succeed at) bargaining with other institutions are subprocesses that (by virtue of being selected for speed and simplicity) are less aligned with human existence than the original overall company/institution, and that less-aligned subprocess grows to take over the institution, while always taking actions that are "good" for the host institution when viewed as a unilateral move in an uncoordinated game (hence passing as "aligned").

At this point, my plan is to try to consolidate what I think are the main confusions in the comments of this post into one or more new concepts to form the topic of a new post.

> At this point, my plan is to try to consolidate what I think are the main confusions in the comments of this post into one or more new concepts to form the topic of a new post.

Sounds great! I was thinking myself about setting aside some time to write a summary of this comment section (as I see it).

If trillion-dollar tech companies stop trying to make their systems do what they want, I will update that marginal deep-thinking researchers should allocate themselves to making alignment (the scalar!) cheaper/easier/better instead of making bargaining/cooperation/mutual-governance cheaper/easier/better. I just don't see that happening given the structure of today's global economy and tech industry.

In your story, trillion-dollar tech companies are trying to make their systems do what they want and failing. My best understanding of your position is: "Sure, but they will be trying really hard. So additional researchers working on the problem won't much change their probability of success, and you should instead work on more-neglected problems."

My position is:

- Eventually people will work on these problems, but right now they are not working on them very much and so a few people can be a big proportional difference.

- If there is going to be a huge investment in the future, then early investment and training can effectively be very leveraged. Scaling up fields extremely quickly is really difficult for a bunch of reasons.

- It seems like AI progress may be quite fast, such that it will be extra hard to solve these problems just-in-time if we don't have any idea what we are doing in advance.

- On top of all that, for many use cases people will actually be reasonably happy with misaligned systems like those in your story (that e.g. appear to be doing a good job, keep the board happy, perform well as evaluated by the best human-legible audits...). So it seems like commercial incentives may not push us to safe levels of alignment.

> My best understanding of your position is: "Sure, but they will be trying really hard. So additional researchers working on the problem won't much change their probability of success, and you should instead work on more-neglected problems."

That is not my position if "you" in the story is "you, Paul Christiano" :) The closest position I have to that one is: "If another Paul comes along who cares about x-risk, they'll have more positive impact by focusing on multi-agent and multi-stakeholder issues or 'ethics' with AI tech than if they focus on intent alignment, because multi-agent and multi-stakeholder dynamics will greatly affect what strategies AI stakeholders 'want' their AI systems to pursue."

If they tried to get you to quit working on alignment, I'd say "No, the tech companies still need people working on alignment for them, and Paul is/was one of those people. I don't endorse converting existing alignment researchers to working on multi/multi delegation theory (unless they're naturally interested in it), but if a marginal AI-capabilities-bound researcher comes along, I endorse getting them set up to think about multi/multi delegation more than alignment."

Yes, you understand me here. I'm not (yet?) in the camp that we humans have "mostly" lost sight of our basic goals, but I do feel we are on a slippery slope in that regard. Certainly many people feel "used" by employers/institutions in ways that are disconnected from their values. People with more job options feel less this way, because they choose jobs that don't feel like that, but I think we are a minority in having that choice.

I think this is an indication of the system serving some people (e.g. capitalists, managers, high-skilled labor) better than others (e.g. the median line worker). That's a really important and common complaint with the existing economic order, but I don't really see how it indicates a Pareto improvement or is related to the central thesis of your post about firms failing to help their shareholders.

(In general wage labor is supposed to benefit you by giving you money, and then the question is whether the stuff you spend money on benefits you.)

Overall, I think I agree with some of the most important high-level claims of the post:

- The world would be better if people could more often reach mutually beneficial deals. We would be more likely to handle challenges that arise, including those that threaten extinction (and including challenges posed by AI, alignment and otherwise). It makes sense to talk about "coordination ability" as a critical causal factor in almost any story about x-risk.

- The development and deployment of AI may provide opportunities for cooperation to become either easier or harder (e.g. through capability differentials, alignment failures, geopolitical disruption, or distinctive features of artificial minds). So it can be worthwhile to do work in AI targeted at making cooperation easier, even and especially for people focused on reducing extinction risks.

I also read the post as implying or suggesting some things I'd disagree with:

- That there is some real sense in which "cooperation itself is the problem." I basically think all of the failure stories will involve some other problem that we would like to cooperate to solve, and we can discuss how well humanity cooperates to solve it (and compare "improve cooperation" to "work directly on the problem" as interventions). In particular, I think the stories in this post would basically be resolved if single-single alignment worked well, and that taking the stories in this post seriously suggests that progress on single-single alignment makes the world better (since evidently people face a tradeoff between single-single alignment and other goals, so that progress on single-single alignment changes what point on that tradeoff curve they will end up at, and since compromising on single-single alignment appears necessary to any of the bad outcomes in this story).

- Relatedly, that cooperation plays a qualitatively different role than other kinds of cognitive enhancement or institutional improvement. I think that both cooperative improvements and cognitive enhancement operate by improving people's ability to confront problems, and both of them have the downside that they also accelerate the arrival of many of our future problems (most of which are driven by human activity). My current sense is that cooperation has a better tradeoff than some forms of enhancement (e.g. giving humans bigger brains) and worse than others (e.g. improving the accuracy of people's and institutions' beliefs about the world).

- That the nature of the coordination problem for AI systems is qualitatively different from the problem for humans, or somehow is tied up with existential risk from AI in a distinctive way. I think that the coordination problem amongst reasonably-aligned AI systems is very similar to coordination problems amongst humans, and that interventions that improve coordination amongst existing humans and institutions (and research that engages in detail with the nature of existing coordination challenges) are generally more valuable than e.g. work in multi-agent RL or computational social choice.

- That this story is consistent with your prior arguments for why single-single alignment has low (or even negative) value. For example, in this comment you wrote "reliability is a strongly dominant factor in decisions in deploying real-world technology, such that to me it feels roughly-correct to treat it as the only factor." But in this story people choose to adopt technologies that are less robustly aligned because they lead to more capabilities. This tradeoff has real costs even for the person deploying the AI (who is ultimately no longer able to actually receive any profits at all from the firms in which they are nominally a shareholder). So to me your story seems inconsistent with that position and with your prior argument. (Though I don't actually disagree with the framing in this story, and I may simply not understand your prior position.)

Thanks for this synopsis of your impressions, and +1 to the two points you think we agree on.

> I also read the post as implying or suggesting some things I'd disagree with:

As for these, some of them are real positions I hold, while some are not:

> - That there is some real sense in which "cooperation itself is the problem."

I don't hold that view. The closest view I hold is more like: "Failing to cooperate on alignment is the problem, and solving it involves being both good at cooperation and good at alignment."

> - Relatedly, that cooperation plays a qualitatively different role than other kinds of cognitive enhancement or institutional improvement.

I don't hold the view you attribute to me here, and I agree wholesale with the following position, including your comparisons of cooperation with brain enhancement and improving belief accuracy:

> I think that both cooperative improvements and cognitive enhancement operate by improving people's ability to confront problems, and both of them have the downside that they also accelerate the arrival of many of our future problems (most of which are driven by human activity). My current sense is that cooperation has a better tradeoff than some forms of enhancement (e.g. giving humans bigger brains) and worse than others (e.g. improving the accuracy of people's and institutions' beliefs about the world).

... with one caveat: some beliefs are self-fulfilling, such as beliefs about cooperation/defection. There are ways of improving belief accuracy that favor defection, and ways that favor cooperation. Plausibly to me, the ways of improving belief accuracy that favor defection are worse than no accuracy improvement at all. I'm not particularly firm in this view, though; it's more of a hedge.

> - That the nature of the coordination problem for AI systems is qualitatively different from the problem for humans, or somehow is tied up with existential risk from AI in a distinctive way. I think that the coordination problem amongst reasonably-aligned AI systems is very similar to coordination problems amongst humans, and that interventions that improve coordination amongst existing humans and institutions (and research that engages in detail with the nature of existing coordination challenges) are generally more valuable than e.g. work in multi-agent RL or computational social choice.

I do hold this view! Particularly the bolded part. I also agree with the bolded parts of your counterpoint, but I think you might be underestimating the value of technical work (e.g., CSC, MARL) directed at improving coordination amongst existing humans and human institutions.

I think blockchain tech is a good example of an already-mildly-transformative technology for implementing radically mutually transparent and cooperative strategies through smart contracts. Make no mistake: I'm not claiming blockchain tech is going to "save the world"; rather, it's changing the way people cooperate, and is doing so as a result of a technical insight. I think more technical insights are in order to improve cooperation and/or the global structure of society, and it's worth spending research efforts to find them.

Reminder: this is not a bid for you personally to quit working on alignment!

> - That this story is consistent with your prior arguments for why single-single alignment has low (or even negative) value. For example, in this comment you wrote "reliability is a strongly dominant factor in decisions in deploying real-world technology, such that to me it feels roughly-correct to treat it as the only factor." But in this story people choose to adopt technologies that are less robustly aligned because they lead to more capabilities. This tradeoff has real costs even for the person deploying the AI (who is ultimately no longer able to actually receive any profits at all from the firms in which they are nominally a shareholder). So to me your story seems inconsistent with that position and with your prior argument. (Though I don't actually disagree with the framing in this story, and I may simply not understand your prior position.)