This is really interesting work and is presented in a way that makes it really useful for others to apply these methods to other tasks. A couple of quick questions:

- In this work, you take a clean run and patch over a specific activation from a corresponding corrupt run. If you had done this the other way around (ie. take a corrupt run and see which clean run activations nudge the model closer to the correct answer), do you think that one would find similar results? Do you think there should be a preference to the whether one patches clean --> corrupt or corrupt --> clean?

- Did you find that the corrupt dataset that you used to patch activations had a noticeable effect on the heads that appeared to be most relevant? Concretely, in the 'random answer' corrupt prompt (ie. replacing the correct answer C_def in the definition with a random word), did you find that the selection of this word mattered (ie. do you expect that selecting a word that would commonly be found in a function definition be superior to other random words in the model's vocab) or were results pretty consistent regardless?

Hi, and thanks for the comment!

Do you think there should be a preference to the whether one patches clean --> corrupt or corrupt --> clean?

Both of these show slightly different things. Imagine an "AND circuit" where the result is only correct if two attention heads are clean. If you patch clean->corrupt (inserting a clean attention head activation into a corrupt prompt) you will not find this; but you do if you patch corrupt->clean. However the opposite applies for a kind of "OR circuit". I historically had more success with corrupt->clean so I teach this as the default, however Neel Nanda's tutorials usually start the other way around, and really you should check both. We basically ran all plots with both patching directions and later picked the ones that contained all the information.

did you find that the selection of [the corrupt words] mattered?

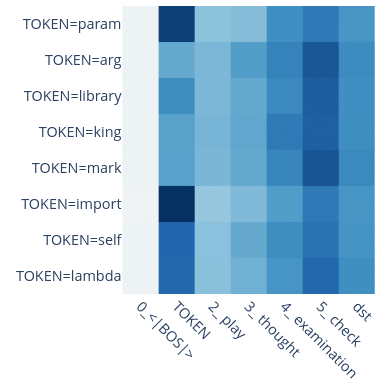

Yes! We tried to select equivalent words to not pick up on properties of the words, but in fact there was an example where we got confused by this: We at some point wanted to patch param and naively replaced it with arg, not realizing that param is treated specially! Here is a plot of head 0.2's attention pattern; it behaves differently for certain tokens. Another example is the self token: It is treated very differently to the variable name tokens.

So it definitely matters. If you want to focus on a specific behavior you probably want to pick equivalent tokens to avoid mixing in other effects into your analysis.

The model ultimately predicts the token two positions after B_def. Do we know why it doesn't also predict the token two after B_doc? This isn't obvious from the diagram; maybe there is some way for the induction head or arg copying head to either behave differently at different positions, or suppress the information from B_doc.

Produced as part of the SERI ML Alignment Theory Scholars Program under the supervision of Neel Nanda - Winter 2022 Cohort.

TL;DR: We found a circuit in a pre-trained 4-layer attention-only transformer language model. The circuit predicts repeated argument names in docstrings of Python functions, and it features

Epistemic Status: We believe that we have identified most of the core mechanics and information flow of this circuit. However our circuit only recovers up to half of the model performance, and there are a bunch of leads we didn’t follow yet.

A_def, …) as well as (b) an actual prompt (load, …). The boxes show attention heads, arranged by layer and destination position, and the arrows indicate Q, K, or V-composition between heads or embeddings. We list three less-important heads at the bottom for better clarity.Introduction

Click here to skip to the results & explanation of this circuit.

What are circuits

What do we mean by circuits? A circuit in a neural network, is a small subset of model components and model weights that (a) accounts for a large fraction of a certain behavior and (b) corresponds to a human-interpretable algorithm. A focus of the field of mechanistic interpretability is finding and better understanding the phenomena of circuits, and recently the field has focused on circuits in transformer language models. Anthropic found the small and ubiquitous Induction Head circuit in various models, and a team at Redwood found the Indirect Object Identification (IOI) circuit in GPT2-small.

How we chose the candidate task

We looked for interesting behaviors in a small, attention-only transformer with 4 layers, from Neel Nanda’s open source toy language models. It was trained on natural language and Python code. We scanned the code dataset for examples where the 4-layer model did much better than a similar 3 layer one, inspired by Neel's open problems list. Interestingly, despite the circuit seemingly requiring just 3 levels of composition, only the 4-layer model could do the task.

The docstring task

The clearest example we found was in Python docstrings, where it is possible to predict argument names in the docstring: In this randomly generated example, a function has the (randomly generated) arguments

load,size,files, andlast. The docstring convention here demands each line starting with:paramfollowed by an argument name, and this is very predictable. Turns out thatattn-only-4lis capable of this task, predicting the next token (filesin the example shown here) correctly in ~75% of cases.files. All argument names and descriptions are randomly sampled words. We've also looked into Google-style docstrings and found similar performance, but won't discuss this further here.Methods: Investigating the circuit

Possible docstring algorithms

There are multiple algorithms which could solve this task, such as

param size, check the order in the definitionsize, files, and predictfilesaccordingly.paramtoken, predict the 3rd variablefiles.load,size,files,last, and inhibit the former two. Add some preference for earlier tokens to preferfilesoverlast.We are quite certain that at least the first two algorithms are implemented to some degree. This is surprising, since one of the two should be sufficient to perform the task; we do not investigate further why this is the case. A brief investigation showed that the implementation of the 2nd algorithm seems less robust and less generalizable that our model's implementation of the first one.

For this work we isolate and focus on the first algorithm. We do this by adding additional variable names to the definition such that the line number and inhibition based methods will no longer produce the right answer.

Original docstring (randomly generated example), with added arguments :

It's worth noting that on this task the model performs worse than on the original one. That is, it doesn't predict

filesas often and when it does it's less confident in its prediction. But it's a weird prompt with a less clear answer, so this is expected. The model will choose the "correct" answerfilesin 56% of the cases (significantly above chance!), the average logit diff is 0.5.Token notation

Since our tokens are generated randomly we will just denote them as

rand0,rand1and so on, highlighting the relevant (repeated) variable names. For repeated tokens we add clarification after an underscore, i.e. whether the token isAin the definition (A_def) or docstring (A_doc), whether a comma followsAorBas,_Aor,_Brespectively, or whether we refer to theparamtoken in line 1, 2 or 3 asparam_1-3.Token notation used for axes labels in this post:

A,B,Cas well asrandtokens are randomly chosen words, butA_defandA_doc(B_defandB_doc) are the same random word. We disambiguateparamand,tokens asparam_1and,_Aetc. so that e.g. the definition contains the tokens |A_def|,_A|B_def|. The special character·makes spaces visible.Patching experiments

Our main method for investigating this circuit is activation patching. That is, overwriting the activations of a model component -- e.g. the residual stream or certain attention head outputs -- with different values. This is also referred to as resampling-ablation, differing from zero or mean-ablation in that we replace the activations in one run with those from a different run, rather than setting them to a constant.

This allows us to run the model on a pair of prompts ("clean" and "corrupted"), say with different variable names, and replace some activations in the clean run with the corresponding activations from the corrupted run. Note that this corrupted --> clean patching is the opposite direction from the commonly used clean --> corrupted patching. Our method will show us whether these activations contained important information, if that information was different between the prompts.

We only investigate how the model knows to choose a correct variable name from all words (definition and docstring arguments) available in the context. We don't focus on how it knows that predicting any variable name (instead of tokens like

def,(,,etc.) is a reasonable thing to do. To this extent weCand the highest wrong-answer logit (maximum logit of all other definition and docstring argument names including corrupted variants, i.e.A,B,rand1,rand2, ..., maximum recalculated every time).C_defin the definition with a random word.C_def(i.e.A_defandB_def) with random words. This does not change the docstring argument valuesA_docorB_doc.A_docandB_doc) with random words, without changing the definition.Running a forward pass on these corrupted prompts gives us activations where some specific piece of information is missing. Patching with these activations allows us to track where this piece of information is used.

For all experiments and visualizations (patching, attention patterns) we use a batch of 50 prompts. For each prompt we randomly generate arguments (

A,B,C) and filler tokens (rand0,rand1, ...).Results: The Docstring Circuit

In this section we will go through the three components that make up our circuit and show how we identified each of them. Most of our findings are based on patching experiments, and in each of the following 3 subsections we will use one of the three corruptions. For each corruption, we first patch the residual stream to track the information flow between layers and token positions. Then we patch the output of every head at every position to understand how that information moves.

Tracking the Flow of the answer token (

C_def)The first step is to simply replace the token that should contain the answer (i.e.

Cin the definition) with a different token, and run the model with this "corrupted" prompt, saving all activations. These saved activations cannot have any information about the real original value ofC_def(the only appearance ofCin the prompt is replaced with a different random word in the corrupted run). Thus, when we do our patching experiments, i.e. overwrite a particular set of activations in a clean run with these corrupted activations, those activations will lose any information about the correctC_defvalue.Residual Stream patching

You can see interactive versions of all of the plots in the Colab notebook.

Now for this first experiment, we patch (i.e. overwrite) the clean activations of residual stream before or after a certain layer and at a certain token position with the equivalent corrupted-run activations. We expect to see a negative change in logit difference anywhere the residual stream carries important information that is influenced by the

C_defvalue.C_deftoken value (the only information that is different in the corruption).C_defdestroys performance -- this is expected since we just overwrite theC_defembedding and make it impossible for the remainder of the model to access the correctC_defvalue.C_defvalue.param_3. This is expected since the last layer only affects the logits, but it also shows us that after layer 3, the residual stream at theparam_3position contains important information about theC_defvalue.Thus we can conclude that during layer 3 the model must have read the

C_definformation from theC_deftoken position and copied that information to theparam_3position.Attention Head patching

Next we investigate which model component is responsible for this copying of information. We know it is one of the attention heads because our model is attention-only, but even aside from this only attention heads can move information between positions.

Rather than overwriting the residual stream, we overwrite just the output of one particular attention head. We overwrite the attention head's output with the output it would have had in a corrupted run, i.e. if it had not know the true value of

C_def. We expect to see negative logit difference in all attention heads that write important information influenced by theC_defvalue.C_deftoken, most significantly heads 3.0 and 3.6.Now we see for every attention head (denoted as layer.head) whether it carries important information about the answer value

C_def, which is the corruption we use here.Most strongly we see heads 3.0 and 3.6 -- these heads are writing information about the

C_defvalue into theparam_3token position. From the previous plot we know that there must be heads in layer 3 that move information from theC_defposition into theparam_3position, and the ones we found here are good candidates. We can confirm they are indeed reading the information from theC_defposition by checking their (clean-run) attention pattern. Both heads attend to all argument names from destinationparam_3, but about twice as much toC_def. This is consistent with positive, but quite low average logit difference of 0.5 on this task.param_3. The heads clearly attend to the definition arguments, and most strongly to the correct answerC_def.Takeaway: Heads 3.0 and 3.6 move information from

C_defto the final positionparam_3, and thus the final output logits. From now on we will call these the Argument Mover Heads, as they copy the name of the argument.Side note: We can see a couple of other heads in the attention head patching plot. We don't think any of these are essential, so feel free to skip this part, but we'll briefly mention them. Head 2.3 seems to write a small amount of useful information into the final position as well, but upon inspection of its attention pattern it just attends to all definition variables without knowing which is the right answer. Furthermore, the output of a bunch of heads (0.0, 0.1, 0.5, 1.2, 2.2) writes information about the

C_defvalue into theC_defposition. We discuss the most significant ones (0.5 and 1.2) in separate sections at the end, but do not investigate the other heads in detail.Tracking the Flow of the other definition tokens (

A_def,B_def)Now that we know where the model is producing the answer, we want to find out how it knows which is the right answer. Logically we know that it must be making use of the matching preceding arguments

AandBin the definition and in the docstring to conclude which argument follows next.Residual Stream Patching

We start by repeating our patching experiments, this time replacing the definition tokens

A_defandB_defwith random argument names. (We will cover docstring tokens in the next section.) First we patch the residual stream activations again, overwriting the activations in a clean run with those from a corrupted run, before or after a certain layer and at a certain position.A_defandB_defwere replaced by random words). Colored parts must depend on the corrupted information and affect the result.We focus on the most significant effects first:

B_deftoken destroys the performance. This makes sense as the model relies onB_defandB_docvalues matching, and without knowing the value ofB_defat all the model will perform badly. We also see a small effect of replacingA_def, but this is much weaker and tells us the model relies mostly onBrather thanAandB, so we will focus on theB_definformation from now on.B_defcontaining the information about the value ofB_defand breaks if we overwrite that position before layer 1.B_defis the comma afterB_def(which we call,_B)! It is worth emphasizing here that theB_defposition will almost always contain information about theB_deftoken, even here and in later layers, but that is no longer relevant for the performance of the model. In layer 2, the model mostly relies on the,_Bposition to contain information aboutB_def.C_defposition is relevant for layer 3. This means that now only the residual stream activations of theC_defposition matter when it comes to differentiating between the clean and corruptedB_defvalues.Side notes: Looking at the less significant effects we can see (i) that between layer 1 and 2 it is actually not only the

,_Bposition but also still theB_defposition that is relevant. It seems these positions share a role here. And (ii) we notice that activations at positionsA_docandB_docare affected by thisA_def/B_defpatch, we will see that this is related to duplicate token head 1.2 and discuss it later.Attention Head patching

Now that we have seen how the information is moving from

B_defto,_Bin layer 1, toC_defin layer 2, and finally toparam_3in layer 3 we want to find out which attention heads are responsible for this. Again we patch attention head outputs, overwriting the clean outputs with those from the corrupted run.A_defandB_defwhere replace by random words. This shows us which heads' outputs into which positions are important and affected byA_defandB_defvalues.Focusing on the most significant effects again, we see exactly two heads match the criteria mentioned in the previous paragraph: Head 1.4 writes information into

,_Band head 2.0 writes intoC_def.We suspect that head 1.4 (writing into

,_B) thus reads this information from theB_defposition. We can confirm this by looking at its attention pattern and see the head indeed strongly attends toB_def.Similarly head 2.0 might be the head copying the information from

,_BtoC_defin layer 2, and indeed its attention pattern strongly attends to,_B.In terms of mechanisms, these heads just seem to attend to their previous tokens. This is a common mechanism in transformers and can easily be implemented based on the positional embeddings, these heads are usually called Previous Token Heads. However since they do not only attend to the previous but the last couple of tokens, and sometimes the current token too, we will call these heads Fuzzy Previous Token Heads here.

Finally we see the heads 3.0 and 3.6 again. As we have already seen in the last attention head patching experiment, these Argument Mover Heads copy information from

C_definto the finalparam_3position, and also explain what we see in this attention head patching plot.Takeaway: Heads 1.4 and 2.0 seem to be Fuzzy Previous Token Heads that move information about the value of the

B_deftoken from theB_defposition via the,_Bposition to theC_defposition.Side notes: Again we see head 0.5 write

B_defdependent information into theB_defposition, similarly as we saw withC_def. We also see head 1.2 writing into theA_docandB_docpositions as mentioned earlier. Both of these will be discussed at the end of this post. We also notice that patching head 2.2's output improves performance -- this is surprising, and reminds us of the "negative heads" found in the IOI paper, but we will leave this for a future investigation.Tracking the Flow of the docstring tokens (

A_doc,B_doc)Our final set of experiments in this section is concerned with analyzing how the docstring is processed in the circuit. As mentioned in the previous section, we know that the model must be using this information, so in now section we want to see

A_docandB_docin the docstringC_defas the final answerResidual Stream Patching

We start again by looking at the residual stream patching plot for a rough orientation, before we dive into the attention head patching plot.

A_docandB_docwere replaced by random words). Color shows effect of patching this part on the performance (logit difference).This looks relatively straightforward! Looking at the first row we see that the

A_docvalue isn't used at all so we ignore it again for the rest of this section -- only the value ofB_docmatters. In terms of layers and positions that are important for the result and influenced by theB_docpatch, we see it is theB_docposition before layer 1, and theparam_3position after layer 1. This means the network does not care about theB_docvalue in theB_docposition anymore after layer 1! It has read the information from there and no longer does anything task-relevant with this information at this position.Let's look for this layer 1 head that copies

B_doc-information intoparam_3in the attention head patching plot.Attention Head Patching

A_docandB_docwhere replace by random words. This shows us which heads' outputs are affected byA_docand (more importantly)B_docvalues.We immediately see head 1.4 writing into

param_3, and its output must importantly depend on the value ofB_docsince overwriting its activations with its corrupted version leads to a large change in logit difference. What is not clear is how head 1.4 is affected by theB_docvalue. Looking at its attention pattern it is clear that the head is definitely attending toB_doc:You might recognize this attention pattern as that of an Induction Head -- a head that attends to the token (

B_doc) following a previous occurrence (param_2) of the current token (param_3) -- see this post for a great explanation.Side note:

param_1is another previous occurrence of the current token followed byA_doc, which is weakly attended to as well. Head's 1.4 attention prefers later induction targets, we'll show how it does it later (it's more complicated than you think!).Induction heads rely on at least one additional head in the layer below, a Previous Token Head at the position the induction had is attending to (

B_doc). This one won't show up in our patching plot since its output depends onparam_2only, so we ran a quick patching experiment replacingparam_2and scanned the attention patterns of layer 0 heads. We see that head 0.2 is important and has the right attention pattern, thus we believe it is the (Fuzzy) Previous Token Head for the induction subcircuit, but we are less confident in this.Take away: We have found an induction circuit allowing head 1.4 to find the

B_doctoken and copy information aboutBinto theparam_3position. From there, the Argument Mover Heads 3.0 and 3.6 use this to locate the answer.Side notes: Less significant heads here are 0.5, 1.2, and 2.2. The former two will be discussed in later sections, and 2.2 (as mentioned in the previous section) won't be discussed further.

It's worth emphasizing that head 1.4 is acting as an Induction Head at this position, while it seemed to be acting like a Fuzzy Previous Tokens Head in the previous section -- from the evidence shown so far it's not clear that 1.4 isn't an Induction Head in both cases but in a dedicated section further down we will show that head 1.4 indeed behaves differently depending on the context!

Summarizing information flow

Summarizing our findings so far, we found that heads 1.4 and 2.0 act as Fuzzy Previous Tokens Heads and move information about

BfromB_defvia,_Bto theC_defposition, completing what we call the "def subcircuit".We also found Fuzzy Previous Tokens Head 0.2 and Induction Head 1.4 moving information about

Bto theparam_3position; we call this the "doc subcircuit".And finally we have the Argument Mover Heads 3.0 and 3.6 at position

param_Cwhose outputs we have shown to depend on bothB_defandB_doc, as well as the answer valueC_def. It is using thatB-information carried by the two subcircuits to attend fromparam_CtoC_defand then copy the answer value from that positionWe have illustrated all these processes in the diagram below, adding notes about Q, K and V composition as inferred from the heads behavior:

Binformation in the definition and docstring respectively, and the final argument mover heads which copy the right answer to the output.In the next sections we will cover (a) some surprising features of the circuit, as well as (b) some additional components that don't seem essential to the information flow but contribute to circuit performance.

Surprising discoveries

We encountered a couple of unexpected behaviors (head 1.4 having multiple functions, head 0.4 deriving position from causal mask) as well as a couple of heads with non-obvious functionality that we want to understand more deeply.

Multi-Function Head 1.4

Recall that the head 1.4 appears twice in our circuit. First, in the def subcircuit, acting as a Fuzzy Previous Token Head. Second, in the doc subcircuit, acting as an Induction Head.

,_Bto the previous tokenB_def.param_3toB_docthat followedparam_2.How does it do it? We investigated how much the behavior is dependent on the destination token.

Consider short prompts of the form

|BOS|S0|A|B|C|S1|, whereA,BandCare different words andS0andS1are the same "special" token. We would expect a pure Induction Head to always attend fromS1toAand a pure Previous Token Head to always attend fromS1toC. But for head 1.4 this behavior will vary depending on what we set asS0andS1. The plot below shows exactly that.|BOS|S0|A|B|C|S1|, whereA,BandCare random words andS0andS1are the same "special" token.From setting the two special tokens to

param, or to⏎······(newline with 6 spaces, which serves a similar role toparamin a different docstring style) we see an induction-like pattern (1st and 2nd row respectively). For a,the attention is mostly focused on the current token and a few tokens back. For an=it's similar except with less attention to the current token. For most other words (e.g.lemon,oil) it only attends toBOS, which we interpret as being inactive.Side note: We suspect that current token isn't even sufficient to judge the behavior. It turns out that in the prompt of the form

|BOS|,|A|B|=|,|it seems to behave as an Induction Head, even though on|BOS|,|A|B|C|,|it's a Fuzzy Previous Token Head. We believe it's useful for dealing with default argument values likesize=None, files="/home". It suggests the behavior is dependent on the wider context, not just on the destination token and probably involves some composition.Positional Information Head 0.4

We didn't know how the head 1.4 attends much more to

B_docthan toA_doc. If it was following a simple induction mechanism, then it should attend equally to both as they both follow aparamtoken.The obvious solution would be to make use of the positional embedding and attend more to recent tokens. However, when we swap the positional embeddings of

A_docandB_docbefore we feed them to 1.4, it doesn't change the attention pattern significantly. We also tested variations of this swap, such as swapping positional embeddings of the whole line, or accounting for layer 0 head outputs leaking positional embeddings, with similar results.The other source of positional information is the causal mask. We see that head 0.4 has an approximately constant attention score, and thus the attention pattern decreases with destination position, similar to what Neel Nanda found (video) in a model trained without positional embeddings.

BOS(left column) is by far the most significant, and decreases the larger the distance between the destination position andBOS.Our hypothesis is that head 0.4 provides the positional information for head 1.4 about which argument (

A_docvsB_doc) is in the most recent line, and thus correct to attend to. Testing this we plot 1.4's attention pattern for four cases: (i) the baseline clean run, and for runs where we swap (i) the positional embeddings ofA_docandB_doc, (ii) the attention pattern of head 0.4 atA_docandB_docdestination position, or (iii) both. We conclude that, indeed, the decisive factor for head 1.4 seems to be head 0.4's attention pattern.A_docandB_doc(from destinationparam_3). This is evidence that the causal attention mask in head 0.4 provides positional information that head 1.4 uses to attend to the most recent docstring line. Swapping head 0.4's attention pattern inverts 1.4's attention, while swapping the positional embedding barely affects it.Duplicate Token Head 0.5 is mostly just transforming embeddings

Head 0.5 is important for the docstring task at positions

B_def,C_def, andB_doc(up to 1.1 logit diff change). But its role can be almost entirely explained as transforming the embedding of the current token. In fact, setting its attention pattern to an identity matrix for the whole prompt loses less than 0.1 logit diff.This embedding transformation explains why head 0.5 shows up in most of our patching plots. We also expect most heads that compose with the embeddings to appear as composing with 0.5.

More generally, we expect the model to rely on the fact that head 0.5's output -- besides some positional information -- only depends on the token embedding of the current token (since any duplicate token has the same token embedding). Thus any methods looking at direct connections between heads and token embeddings may have to incorporate effects from heads like this.

The remaining 0.1 logit diff from diagonalizing head 0.5's attention comes mostly (~74%) from the attention pattern at destination

B_docand K-composition with Head 1.4. We discuss these details in the notebook, but don't focus on it here since (a) the effect is small and (b) does largely disappear if we ablate all the heads we believe not to be part of our circuit.Duplicate Token Head 1.2 is helping Argument Movers

We noticed another Duplicate Token Head, 1.2. This head attends to the current token

X_doc, previous occurrenceX_def(whereXis eitherAorB), and theBOStoken if the destination is a docstring argument. If the destination is a definition argument it only attends toX_deforBOS.We varied head 1.2's attention pattern between

BOS,X_def, andX_docand notice that only the amount of attention toBOSmatters for performance.Furthermore, based on our patching composition scores[1] we observed that head 1.2 composes with the Argument Mover Heads 3.0 and 3.6 (both heads behave similarly). So 1.2 must influence 3.0 and 3.6's attention patterns.

To analyze what it is doing we look at 3.0's attention pattern (destination

param_3) as a function of 1.2's attention pattern (destinationB_doc). We already know that the only relevant variable is how much head 1.2 attends toBOSso we vary this in the plot below:B_doc. We found that attention to sourceBOSmatters so we show 3.0's baseline attention pattern in the top panel, the relative change as a function of 1.2'sBOS-attention in the bottom panel.The baseline attention pattern of head 3.0 is attending mostly to definition arguments, but with a small bump at

B_doc. We observe that varying 1.2's attention toBOSchanges the size of this bump! So we think that head 1.2 is used to suppress the Argument Mover Heads' attention to docstring tokens and thus improves circuit performance by predicting the wrongBanswer (viaB_doc) less often.Our explanation for this, i.e. for why head 1.2's attention to

BOS(color in plot) is higher in the definition, is the following: In the docstring (as shown here) the head encounters argumentsX(whereXisAorB) as repeated tokens, so its attention is split betweenBOS,X_def, andX_docand thus attends less toBOS. In the definition, its attention is only split betweenBOSandX_defand thus attends more toBOS.This argument makes sense, and it is plausible that this is the reason for the improved performance. However we should note that this "small bump" is a relatively small issue, and the total effect of this head at

A_docorB_doc(compared to the corrupted version) is only a logit difference change of -0.12. The change atA_deforB_defis +0.05, and -0.20 atC_def. This matches with our hypothesis that head 1.2 increases attention to definition arguments, and decreases attention to docstring arguments.Putting it all together

Now we have a solid hypothesis which heads are involved in the circuit, mainly heads 1.4, 2.0, 3.0, and 3.6 for moving the information, head 0.5 for token transformation and some additional support, as well as 1.2 for additional support.

At least Previous Token Head 0.2 and Positional Information Head 0.4 (and potentially more) are also essential parts of the circuit, but won't show differ in our patching experiments. To find these and any further heads we would need to expand to a non-docstring prompt distribution, but that's not our focus here.

Our final check to test whether this circuit is sufficient for the task is to patch (i.e. resample-ablate) all other heads and see if we recover the full performance. Note that we could expand this to take into account the heads position and composition (e.g. with causal scrubbing) but stick to the more coarse-grained check for now.

Testing the circuit performance with only this set of heads (0.2, 0.4, 0.5, 1.2, 1.4, 2.0, 3.0, 3.6) we get a success rate for predicting the right argument of 42% (chance is 17%).

The full model for comparison achieves 56%, and slightly augmenting the full model by removing heads 0.0 and 2.2 gave 72%.

We found that there is one large improvement to our circuit, adding head 1.0 brings performance to 48%, and also adding heads 0.1 and 2.3 brings performance to 58%. Beyond this point we found only small performance gains per head.

Open questions & leads

We believe we have understood most of the basic circuit components and what they do, but there are still details we want to investigate in the future.

C_defwith e.g.rand2and see if patching 2.3's output has an effect (Thanks to Neel for the idea!)Summary: We found a circuit that determines argument names in Python docstrings. We selected this behaviour because a 4-layer attention-only toy model could do the task while a 3-layer one could not. We "reverse engineered" the behaviour and showed that the circuit relies on 3 levels of composition. We find several features we have not seen before in this context, such as a multi-function attention head and an attention head deriving positional information using the causal attention mask. We also notice a large number of non-crucial mechanisms that improve performance by small amounts. We also found motifs seen in other works such as our Argument Mover heads that resemble the Name Mover heads found in the IOI paper.

Accompanying Colab notebook with all interactive plots is here.

Acknowledgements: Neel Nanda substantially supported us throughout the SERIMATS program and gave very helpful feedback on our research and this draft. Furthermore Arthur Conmy, Aryan Bhatt, Eric Purdy, Marius Hobbhahn, Wes Gurnee provided valuable feedback on research ideas and the draft.

This project would not have been possible without extensive use of TransformerLens, as well as the CircuitsVis and PySvelte.

We use a new kind of composition score based on patching (point-to-point resampling ablation): Composition between head Top and head Bottom is "How much does Top's output meaningfully change when we feed it the corrupted rather than clean output of Bottom?". We plan to expand on this in a separate post, but happy to discuss!